Introduction

In the relentlessly evolving landscape of software engineering, the year 2026 presents a paradox: unprecedented technological capability coupled with an alarming rate of project failure and escalating technical debt. A recent, albeit anonymized, industry report from a leading technology consultancy indicated that nearly 70% of enterprise software initiatives launched between 2023 and 2025 either failed to meet their objectives, exceeded budget by over 50%, or were significantly delayed, primarily due to architectural shortcomings and an inability to adapt to changing requirements. This statistic underscores a critical, often unspoken, problem: while foundational software design principles are widely taught, the application of truly advanced, strategic architectural patterns remains a specialized, often elusive, skill.

The core problem this article addresses is the widening chasm between theoretical knowledge of elementary software design patterns and the practical mastery required to engineer resilient, scalable, and maintainable systems in complex, distributed environments. Senior technology professionals, architects, and lead engineers often find themselves grappling with the inherent complexity of modern systems—cloud-native deployments, real-time data processing, pervasive AI integration, and stringent security and compliance mandates—without a definitive, actionable framework for advanced architectural decision-making. The opportunity, therefore, lies in equipping these leaders with a deep understanding of essential advanced patterns that transcend mere coding constructs, instead offering blueprints for organizational and system-wide success.

Our central argument, the unique value proposition of this article, is that the strategic adoption of software design patterns beyond the foundational Gang of Four repertoire is not merely an optimization but a fundamental necessity for navigating the challenges and seizing the opportunities of the modern digital economy. This handbook distills decades of collective industry and academic experience into a definitive guide focusing on five essential advanced patterns: Domain-Driven Design (DDD) with Tactical Patterns, Clean Architecture, Event-Driven Architecture (EDA) with Event Sourcing and CQRS, Microservices with the Distributed Saga Pattern, and the Strangler Fig Application Pattern. These patterns, when applied judiciously, empower organizations to build systems that are not only robust and performant but also adaptable, allowing for continuous evolution in a hyper-competitive market.

This article embarks on an exhaustive journey, starting with the historical genesis of design patterns and progressing through foundational theories, the current technological landscape, and rigorous selection frameworks. We will delve into detailed implementation methodologies, best practices, and common pitfalls. Real-world case studies will illustrate practical applications, followed by deep dives into performance, security, scalability, and DevOps integration. Crucially, we will explore the organizational impact, cost management, and ethical considerations inherent in modern software development. While this guide aims for comprehensiveness in advanced patterns, it will not delve into basic language-specific syntax, elementary data structures, or introductory object-oriented programming concepts, assuming the reader possesses a solid foundational understanding of software development principles.

The relevance of this topic in 2026-2027 cannot be overstated. With the acceleration of digital transformation initiatives, the proliferation of sophisticated cyber threats, the imperative for real-time analytics, and the increasing demand for sustainable and ethically sound technology solutions, organizations require architectures that are inherently flexible and resilient. The patterns discussed herein directly address these market shifts. They enable enterprises to build systems that can evolve to absorb emerging technologies such as advanced AI models and next-generation blockchain applications, and to prepare for longer-term shifts such as quantum computing, thereby securing a competitive edge in an increasingly complex digital ecosystem.

Historical Context and Evolution

To appreciate the significance of advanced software design patterns, one must first understand the historical trajectory of software development, a journey marked by recurring challenges and evolving solutions. The evolution of software architecture is a testament to humanity's continuous struggle with complexity and change.

The Pre-Digital Era

Before the widespread adoption of high-level programming languages and sophisticated operating systems, software development was largely an artisanal craft. In the pre-digital era, or more accurately, the early digital era (1940s-1960s), programming involved direct interaction with machine hardware, often using machine code or assembly language, or even physically rewiring plugboards. Applications were monolithic by necessity, designed for specific, tightly coupled hardware. Concepts of modularity were primitive, often limited to subroutine calls within a single, linear program. Maintenance was arduous, and extensibility was an afterthought, leading to fragile systems that were notoriously difficult to modify.

The Founding Fathers/Milestones

The seeds of modern software engineering were sown by visionaries who recognized the burgeoning complexity. Edsger W. Dijkstra's seminal work on structured programming in the late 1960s advocated for clear control flow and the avoidance of "GOTO" statements, laying the groundwork for more maintainable code. David Parnas introduced the crucial concept of information hiding in the early 1970s, emphasizing the separation of concerns by encapsulating design decisions that are likely to change. This principle is fundamental to nearly all modern design patterns. Later, in the 1980s, Object-Oriented Programming (OOP), whose roots trace back to Simula in the 1960s, was popularized by languages like Smalltalk and C++. It provided new tools for abstraction, encapsulation, inheritance, and polymorphism, offering powerful mechanisms to manage complexity at the class level. However, a systematic approach to applying these OOP principles to larger-scale architectural problems was still nascent.

The First Wave (1990s-2000s)

The 1990s marked a pivotal moment with the 1994 publication of "Design Patterns: Elements of Reusable Object-Oriented Software" by Erich Gamma, Richard Helm, Ralph Johnson, and John Vlissides, the "Gang of Four" (GoF). This book cataloged 23 fundamental design patterns, providing a common vocabulary and proven solutions for recurring problems in object-oriented design. These patterns, such as Singleton, Factory Method, Observer, and Strategy, became the bedrock of software engineering education and practice, significantly improving code readability, reusability, and maintainability. Concurrently, the rise of enterprise application frameworks like J2EE (later Java EE) and .NET popularized broader architectural patterns such as Model-View-Controller (MVC), which originated in Smalltalk in the late 1970s, and Data Access Object (DAO), addressing the challenges of building multi-tiered, data-intensive applications. Despite these advancements, systems often remained monolithic, scaling vertically and suffering from tight coupling between layers, leading to "enterprise spaghetti" architectures that were difficult to evolve.

The Second Wave (2010s)

The 2010s witnessed major paradigm shifts driven by the explosion of the internet, cloud computing, and agile methodologies. Service-Oriented Architecture (SOA), which had emerged in the early 2000s as an attempt to decouple enterprise systems into interoperable services, often facilitated by Enterprise Service Buses (ESBs), had already exposed both the promise and the pitfalls of service decomposition. This era also saw the rise of NoSQL databases, addressing the scalability limitations of traditional relational databases for certain workloads. Most significantly, microservices began to gain traction, advocating for decomposing applications into small, independently deployable services. This shift promised greater agility, resilience, and scalability, moving away from monolithic deployments. Accompanying this was the DevOps movement, emphasizing collaboration and automation across development and operations to facilitate continuous integration and continuous delivery (CI/CD). While transformative, this wave also introduced new complexities: distributed transaction management, service discovery, increased operational overhead, and challenges in maintaining data consistency across multiple services.

The Modern Era (2020-2026)

The current state-of-the-art in software engineering is characterized by an intensified focus on cloud-native architectures, serverless computing, event-driven paradigms, and the pervasive integration of AI/ML. Systems are expected to be highly resilient, globally distributed, observable, and adaptable to real-time changes. Concepts like "Platform Engineering" are gaining prominence, aiming to provide self-service capabilities to development teams. Security is no longer an afterthought but a fundamental design consideration, with "security by design" becoming a mantra. The challenge has shifted from merely breaking down monoliths to effectively managing the complexity of distributed systems, ensuring data integrity, achieving true observability, and fostering rapid innovation while maintaining operational stability. This era demands advanced architectural thinking, moving beyond component-level patterns to system-level strategies that address scale, resilience, and the inherent uncertainty of modern business environments.

Key Lessons from Past Implementations

History offers invaluable lessons. The failures of past implementations often stemmed from an underestimation of complexity, particularly regarding distributed systems, and a lack of foresight regarding evolving business requirements. We learned that tight coupling, whether between modules, layers, or services, invariably leads to fragility and inhibits change. The "big bang" approach to enterprise software development proved consistently problematic, highlighting the need for iterative, evolutionary design. Successful implementations, conversely, emphasized modularity, clear separation of concerns, robust error handling, and the ability to adapt. The importance of a common vocabulary, first established by the GoF patterns, remains crucial for effective communication within and across development teams, extending now to architectural patterns that define system boundaries and interaction models. Ultimately, the past teaches us that software architecture is not a static blueprint but a living entity that must evolve, and this evolution is best guided by proven, advanced patterns.

Fundamental Concepts and Theoretical Frameworks

A rigorous understanding of advanced software patterns necessitates a solid grasp of underlying principles and theoretical constructs. These foundations provide the intellectual scaffolding upon which robust, maintainable, and scalable systems are built, transforming design from an art into a discipline.

Core Terminology

- Software Design Pattern: A reusable solution to a commonly occurring problem within a given context in software design. It is not a finished design that can be directly transformed into code, but a description or template for how to solve a problem that can be used in many different situations.

- Architectural Pattern: A fundamental structural organization schema for software systems. It provides a set of predefined subsystems, specifies their responsibilities, and includes rules and guidelines for organizing the relationships between them. Examples include Microservices, Layered Architecture, and Event-Driven Architecture.

- Anti-Pattern: A common response to a recurring problem that is usually ineffective and may be counterproductive. It describes a solution that initially seems appropriate but leads to bad consequences.

- Coupling: The degree of interdependence between software modules; how closely two modules are connected. High coupling makes systems difficult to change and test.

- Cohesion: The degree to which the elements inside a module belong together. High cohesion indicates that a module's elements are functionally related, performing a single, well-defined task.

- Modularity: The degree to which a system's components can be separated and recombined, often with the idea of "loose coupling and high cohesion."

- Encapsulation: The bundling of data with the methods that operate on that data, together with restricting direct access to some of an object's components, so that external code cannot manipulate internal state directly.

- Abstraction: The process of hiding complex implementation details and showing only the essential features of an object or system. It focuses on "what it does" rather than "how it does it."

- Resilience: The ability of a system to recover from failures and continue to function, even under adverse conditions. In distributed systems, this often involves fault tolerance and self-healing mechanisms.

- Scalability: The ability of a system to handle a growing amount of work by adding resources (either vertically by upgrading existing resources or horizontally by adding more resources).

- Observability: The measure of how well internal states of a system can be inferred from its external outputs (e.g., logs, metrics, traces). It is crucial for understanding and debugging complex distributed systems.

- Domain Model: An object model of the domain that incorporates both behavior and data. It represents the business logic and rules of a specific problem space.

- Bounded Context: A central pattern in Domain-Driven Design (DDD), defining a logical boundary within which a particular domain model is consistently applied. It helps manage complexity in large domains by breaking them into smaller, coherent sub-domains.

- Event: A significant occurrence or change of state within a system, often immutable and representing something that has happened in the past. Fundamental to event-driven architectures.

- Command: An instruction to perform an action, typically issued to a specific aggregate or service, with the expectation of a state change.

- Query: A request for information from a system, typically not intended to cause a state change.
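To make the Command, Event, and Query definitions concrete, here is a minimal Python sketch built around a hypothetical order aggregate; all class and function names are illustrative assumptions, not a framework API. A command is validated against current state and, if accepted, yields immutable events; state is rebuilt by replaying those events, which is the core idea behind Event Sourcing; and a query reads state without changing it.

```python
from dataclasses import dataclass
from typing import List, Optional


# Commands: imperative requests that the aggregate may reject.
@dataclass(frozen=True)
class PlaceOrder:
    order_id: str
    amount: int  # hypothetical: amount in cents


@dataclass(frozen=True)
class CancelOrder:
    order_id: str


# Events: immutable facts, named in the past tense.
@dataclass(frozen=True)
class OrderPlaced:
    order_id: str
    amount: int


@dataclass(frozen=True)
class OrderCancelled:
    order_id: str


@dataclass
class OrderState:
    order_id: Optional[str] = None
    amount: int = 0
    status: str = "new"


def apply(state: OrderState, event) -> OrderState:
    """Fold one event into state. Events record the past, so they are never rejected."""
    if isinstance(event, OrderPlaced):
        return OrderState(event.order_id, event.amount, "placed")
    if isinstance(event, OrderCancelled):
        return OrderState(state.order_id, state.amount, "cancelled")
    return state


def replay(events) -> OrderState:
    """Rebuild current state from the full event history."""
    state = OrderState()
    for event in events:
        state = apply(state, event)
    return state


def handle(events: List, command) -> List:
    """Validate a command against current state and return the resulting events."""
    state = replay(events)
    if isinstance(command, PlaceOrder):
        if state.status != "new":
            raise ValueError("order already exists")
        if command.amount <= 0:
            raise ValueError("amount must be positive")
        return [OrderPlaced(command.order_id, command.amount)]
    if isinstance(command, CancelOrder):
        if state.status != "placed":
            raise ValueError("only placed orders can be cancelled")
        return [OrderCancelled(command.order_id)]
    raise TypeError(f"unknown command: {command!r}")


def order_status(events) -> str:
    """Query: reads state and causes no change."""
    return replay(events).status
```

For example, appending the events returned by `handle(log, PlaceOrder("o-1", 4999))` and then `handle(log, CancelOrder("o-1"))` to an empty list `log` leaves `order_status(log)` returning `"cancelled"`, while a second `CancelOrder` is rejected with `ValueError` because the state no longer permits it.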

Theoretical Foundation A: SOLID Principles

The SOLID Principles, a set of five design principles promoted by Robert C. Martin (Uncle Bob) and given their acronym by Michael Feathers, foster maintainable, flexible, and understandable object-oriented software. They are largely engineering heuristics rather than formal laws, although the Liskov Substitution Principle does have a formal basis in Barbara Liskov and Jeannette Wing's definition of behavioral subtyping. Applied together, they help ensure that components can be safely substituted and extended without breaking existing functionality. Adherence to SOLID principles directly contributes to low coupling and high cohesion, which are prerequisites for applying advanced patterns effectively.

The principles are:

- Single Responsibility Principle (SRP): A class should have only one reason to change. This principle emphasizes high cohesion by ensuring each module or class performs a single, well-defined function. Logically, this minimizes the surface area for defects and simplifies testing.

- Open/Closed Principle (OCP): Software entities (classes, modules, functions, etc.) should be open for extension, but closed for modification. This is crucial for evolutionary design, allowing new functionality to be added without altering existing, tested code, thereby reducing the risk of introducing regressions.

- Liskov Substitution Principle (LSP): Subtypes must be substitutable for their base types without altering the correctness of the program. This principle ensures that inheritance hierarchies are well-formed and that polymorphism behaves as expected, preventing unexpected side effects when using derived classes.

- Interface Segregation Principle (ISP): Clients should not be forced to depend on interfaces they do not use. This advocates for fine-grained interfaces, promoting loose coupling and preventing "fat" interfaces that burden implementers with irrelevant methods.

- Dependency Inversion Principle (DIP): High-level modules should not depend on low-level modules. Both should depend on abstractions. Abstractions should not depend on details. Details should depend on abstractions. This principle is foundational for achieving inversion of control and enabling architectures like Clean Architecture, promoting flexibility and testability by decoupling policy from mechanism.
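The Dependency Inversion and Open/Closed principles are easiest to see in code. In the following Python sketch, where every class name is a hypothetical illustration, a high-level order-confirmation policy depends only on a `Notifier` abstraction expressed as a `typing.Protocol`. Concrete channels are low-level details supplied from outside, so adding a new channel requires no change to the policy class.

```python
from typing import List, Protocol


class Notifier(Protocol):
    """Abstraction owned by the high-level policy (DIP)."""

    def send(self, recipient: str, message: str) -> None: ...


class EmailNotifier:
    """Low-level detail: a stand-in email channel that records instead of sending."""

    def __init__(self) -> None:
        self.sent: List[str] = []

    def send(self, recipient: str, message: str) -> None:
        self.sent.append(f"email to {recipient}: {message}")


class SmsNotifier:
    """A second channel, added without touching the policy class (OCP)."""

    def __init__(self) -> None:
        self.sent: List[str] = []

    def send(self, recipient: str, message: str) -> None:
        self.sent.append(f"sms to {recipient}: {message}")


class OrderConfirmationService:
    """High-level policy: knows nothing about email or SMS, only about Notifier."""

    def __init__(self, notifier: Notifier) -> None:
        self._notifier = notifier  # dependency is injected, not constructed here

    def confirm(self, customer: str, order_id: str) -> None:
        self._notifier.send(customer, f"Order {order_id} confirmed")
```

Because the dependency is injected, a test can pass in a recording notifier such as `EmailNotifier` above and assert on `sent`, which is the testability benefit the principle describes.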

Theoretical Foundation B: Conway's Law

Melvin Conway's Law, first articulated in 1968, states: "Any organization that designs a system (defined broadly) will produce a design whose structure is a copy of the organization's communication structure." This seemingly simple observation carries profound implications for software architecture, particularly when considering advanced patterns like Microservices and Domain-Driven Design. Conway's Law posits that the interfaces between components in a system will reflect the communication paths and social boundaries within the organization that built it.

The law highlights that technical architecture is inextricably linked to organizational structure and human communication. For instance, if a large organization is structured into separate teams for frontend, backend, and database, it's highly probable their software system will exhibit corresponding architectural layers, potentially leading to bottlenecks and coordination overhead at the interfaces. Conversely, if an organization aims to build a microservices architecture, Conway's Law suggests that structuring teams around specific business domains (e.g., "Order Management Team," "Customer Profile Team") will naturally lead to microservices that align with those bounded contexts, fostering autonomy and reducing cross-team dependencies. Ignoring Conway's Law often results in friction, communication overheads, and an architecture that fights against the very people meant to build and maintain it. This is the rationale behind the "Inverse Conway Maneuver," in which organizational structure is intentionally designed to promote a desired architectural style.

Conceptual Models and Taxonomies

To effectively reason about complex systems, conceptual models and taxonomies are indispensable. These models provide abstractions that simplify understanding and facilitate communication. For advanced patterns, several conceptual models are critical:

- Layered Architecture Model: This classic model divides a system into horizontal layers, each with a specific role and responsibility, with dependencies flowing in one direction only (e.g., the presentation layer depends on the application layer, which depends on the domain layer, which depends on the infrastructure layer). While foundational, it can lead to "leaky abstractions" or "layer violations" without careful design. Clean Architecture is a modern evolution of this model that applies dependency inversion so that infrastructure depends on the domain rather than the reverse.

- Bounded Context Map (DDD): A visual representation of how different Bounded Contexts (from Domain-Driven Design) within an enterprise interact. It illustrates the relationships (e.g., upstream/downstream, customer/supplier, shared kernel, anti-corruption layer) between different domain models, crucial for understanding complex enterprise landscapes and planning integrations.

- Event Storming Diagrams: A collaborative, workshop-based technique for quickly exploring complex business domains and identifying events, commands, aggregates, and bounded contexts. Participants use sticky notes to visualize business processes and system behavior, leading to a shared understanding that can directly inform the design of Event-Driven Architectures and Domain-Driven Design implementations.

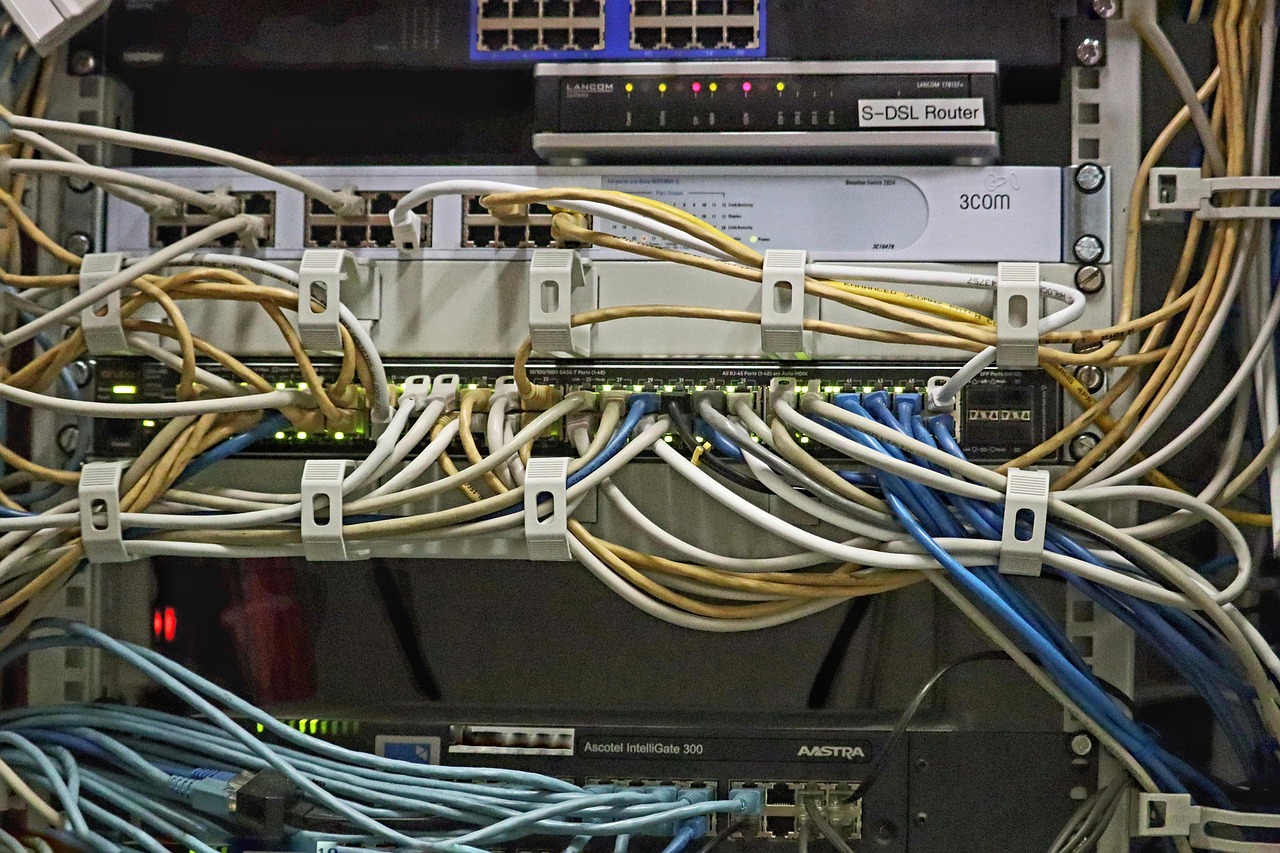

- Distributed System Topology Diagrams: Visual representations of how services interact in a distributed environment, including data flows, API gateways, message brokers, service meshes, and deployment boundaries. These are critical for understanding communication paths, potential failure points, and observability requirements in microservices architectures.

These models are not merely academic exercises; they are practical tools that bridge the gap between abstract concepts and concrete implementations, enabling teams to reason about the implications of their architectural choices.
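As a small illustration of how a conceptual model can become an enforceable rule, the following Python sketch checks module dependencies against the layered model's one-directional dependency rule. The layer names follow the classic ordering described above; the module names, the `(source, target)` dependency list, and the `strict` flag (which also forbids skipping a layer) are hypothetical assumptions rather than the interface of any real architecture-linting tool.

```python
from typing import Dict, List, Tuple

# Classic layered ordering: index 0 is the top layer.
# A layer may depend on its own layer or on layers below it (higher index).
LAYERS = ["presentation", "application", "domain", "infrastructure"]


def layer_violations(
    module_layer: Dict[str, str],
    dependencies: List[Tuple[str, str]],
    strict: bool = False,
) -> List[Tuple[str, str]]:
    """Return the (source, target) dependencies that break the layering rule.

    Relaxed mode flags only upward dependencies; strict mode also flags
    dependencies that skip over an intermediate layer.
    """
    rank = {name: i for i, name in enumerate(LAYERS)}
    violations: List[Tuple[str, str]] = []
    for source, target in dependencies:
        src = rank[module_layer[source]]
        dst = rank[module_layer[target]]
        if dst < src:  # points upward, e.g. infrastructure -> application
            violations.append((source, target))
        elif strict and dst > src + 1:  # skips at least one layer
            violations.append((source, target))
    return violations


modules = {
    "web.views": "presentation",
    "app.checkout": "application",
    "domain.order": "domain",
    "infra.db": "infrastructure",
}
deps = [
    ("web.views", "app.checkout"),
    ("app.checkout", "domain.order"),
    ("domain.order", "infra.db"),
    ("infra.db", "app.checkout"),  # upward: a layer violation
    ("web.views", "infra.db"),     # skips layers: flagged only in strict mode
]
```

Here `layer_violations(modules, deps)` flags only `("infra.db", "app.checkout")`, while `strict=True` also flags `("web.views", "infra.db")`. To model Clean Architecture instead, one would reorder `LAYERS` so that infrastructure is the outermost ring and the domain the innermost.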

First Principles Thinking

First principles thinking, a mode of reasoning with roots in Aristotle's philosophy and popularly associated with physics, involves breaking down complex problems into their fundamental truths and reasoning up from there, rather than reasoning by analogy. Applied to software engineering and advanced design patterns, it means questioning conventional wisdom and asking: "What are the absolute, irreducible elements of this problem?"

For example, instead of asking "Should we use microservices?", a first principles approach might ask:

- What is the fundamental purpose of this system? (e.g., to enable users to make purchases, to process financial transactions).

- What are the core capabilities it must provide? (e.g., order creation, inventory management, payment processing).

- What are the inherent complexities we need to manage? (e.g., concurrent access, data consistency across distributed components, evolving business rules, high throughput, low latency).

- What are the fundamental constraints? (e.g., budget, team size, regulatory compliance, time to market).

The Current Technological Landscape: A Detailed Analysis

The modern software engineering landscape in 2026 is characterized by hyper-connectivity, elastic infrastructure, and an increasing reliance on intelligent automation. Understanding this environment is crucial for appreciating the context in which advanced design patterns operate and for making informed architectural choices.

Market Overview

The global software market continues to grow rapidly, with some industry forecasts projecting it to exceed $1 trillion by 2027, driven by digital transformation, cloud adoption, AI integration, and the demand for real-time data processing. Major players like Amazon (AWS), Microsoft (Azure), and Google (GCP) dominate the cloud infrastructure space, providing the foundational platforms for modern applications. The ecosystem is vibrant, with continuous innovation in areas such as serverless computing, edge computing, quantum computing research, and AI/ML operationalization (MLOps). This growth fuels a relentless demand for highly skilled software architects and engineers capable of designing and implementing systems that are not only performant but also cost-efficient and resilient against an escalating threat landscape.

Category A Solutions: Cloud-Native Platforms and Services

Cloud-native development has moved beyond a trend to become the default paradigm for many enterprises. This category encompasses the vast array of services offered by major cloud providers designed to build and run scalable applications in the cloud.

- Containerization Technologies: Kubernetes (K8s) remains the de facto standard for container orchestration, enabling declarative management of containerized workloads. Tools like Helm for package management and Istio for service mesh capabilities extend Kubernetes' power, providing sophisticated traffic management, security, and observability for microservices.

- Serverless Computing: Functions-as-a-Service (FaaS) offerings like AWS Lambda, Azure Functions, and Google Cloud Functions continue to gain traction for event-driven workloads, reducing operational overhead and enabling granular scaling. This paradigm strongly aligns with event-driven architecture patterns.

- Managed Databases: Cloud providers offer highly scalable and resilient managed database services for both relational (e.g., Amazon Aurora, Azure SQL Database) and NoSQL (e.g., DynamoDB, Cosmos DB, MongoDB Atlas) databases, abstracting away much of the operational complexity.

- Messaging and Streaming Services: Services like Amazon SQS/SNS, Kafka (managed versions like Confluent Cloud, Amazon MSK), and Google Pub/Sub are foundational for building event-driven systems, enabling asynchronous communication and data streaming at scale.

Category B Solutions: Enterprise Integration & Data Orchestration

As systems become more distributed, the challenges of integration and data orchestration become paramount. This category focuses on solutions that enable seamless communication and data flow across disparate systems.

- API Management Platforms: Solutions like Apigee, AWS API Gateway, and Azure API Management provide a centralized way to design, secure, publish, monitor, and analyze APIs, which are the backbone of microservices communication. They are crucial for implementing patterns like API Gateway.

- Integration Platform as a Service (iPaaS): Platforms like MuleSoft, Boomi (formerly Dell Boomi), and Workato offer cloud-based solutions for connecting applications, data, and processes across hybrid environments, often featuring visual designers and pre-built connectors.

- Data Streaming and ETL/ELT Tools: Beyond raw messaging, tools like Apache Flink, Apache Spark, and dedicated ETL/ELT platforms (e.g., Informatica, Talend) are critical for processing and transforming data streams, enabling real-time analytics and data synchronization across bounded contexts.

- Workflow Orchestration: Tools like Apache Airflow, Temporal, and AWS Step Functions allow for the definition and execution of complex, long-running business processes, which is essential for implementing patterns like the Distributed Saga.
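The essence of an orchestrated Distributed Saga can be sketched in a few lines of Python. Each step pairs a local action with a compensating action. If a step fails, the compensations of the already-completed steps run in reverse order. The step names and the `SagaStep`/`run_saga` helpers are hypothetical, and this is not the API of Temporal, AWS Step Functions, or Airflow; those tools add durable state, retries, and timeouts that this sketch deliberately omits.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class SagaStep:
    name: str
    action: Callable[[], None]        # local transaction in one service
    compensation: Callable[[], None]  # semantic undo of that transaction


class SagaFailed(Exception):
    """Raised after compensations have run for a failed saga."""


def run_saga(steps: List[SagaStep], log: List[str]) -> None:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    completed: List[SagaStep] = []
    for step in steps:
        try:
            step.action()
        except Exception as exc:
            log.append(f"failed: {step.name}")
            for done in reversed(completed):
                done.compensation()
                log.append(f"compensated: {done.name}")
            raise SagaFailed(step.name) from exc
        completed.append(step)
        log.append(f"done: {step.name}")


def decline_payment() -> None:
    # Hypothetical failure in the payment service.
    raise RuntimeError("card declined")


order_saga = [
    SagaStep("reserve_inventory", lambda: None, lambda: None),
    SagaStep("charge_payment", decline_payment, lambda: None),
    SagaStep("schedule_shipping", lambda: None, lambda: None),
]
```

Running `run_saga(order_saga, log)` raises `SagaFailed`, and `log` records `done: reserve_inventory`, `failed: charge_payment`, and `compensated: reserve_inventory`; shipping is never scheduled. A real orchestrator must also persist this progress so that compensation survives a crash of the orchestrator itself.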

Category C Solutions: Developer Experience and Platform Engineering

With the rise of complex cloud-native architectures, the focus has shifted towards enhancing developer experience and streamlining the path from code to production. Platform Engineering is an emerging discipline aiming to provide internal developer platforms (IDPs).

- Internal Developer Platforms (IDPs): These are curated sets of tools, services, and processes that provide developers with a self-service experience for building, deploying, and operating applications. They often abstract away infrastructure complexity, allowing developers to focus on business logic.

- GitOps Tools: FluxCD and ArgoCD are popular tools for implementing GitOps, where Git repositories are the single source of truth for declarative infrastructure and application configurations, enabling automated deployments and rollbacks.

- Observability Stacks: Commercial platforms like Datadog and New Relic, the Grafana stack (Grafana, Loki, and Tempo, commonly paired with Prometheus), and the vendor-neutral OpenTelemetry instrumentation standard provide comprehensive monitoring, logging, and tracing capabilities, which are non-negotiable for understanding the behavior of distributed systems and debugging issues.

- Security Scanning and Policy Enforcement: Tools like SonarQube, Snyk, and Open Policy Agent (OPA) are integrated into CI/CD pipelines to ensure code quality, identify vulnerabilities early, and enforce security and compliance policies across the development lifecycle.
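GitOps controllers such as FluxCD and ArgoCD work by continuously reconciling the state declared in Git with the state observed in the cluster. The Python sketch below models only that core diff-and-converge idea over a hypothetical dictionary mapping resource names to image tags. It is not either tool's API, and it assumes the controller is permitted to prune (delete) resources that no longer appear in Git.

```python
from typing import Dict, List, Tuple

# Hypothetical state representation: resource name -> declared spec (an image tag).
State = Dict[str, str]
Action = Tuple[str, str, str]  # (verb, resource name, spec)


def plan(desired: State, actual: State) -> List[Action]:
    """Compute the actions needed to converge actual state to desired state."""
    actions: List[Action] = []
    for name in sorted(desired):
        if name not in actual:
            actions.append(("create", name, desired[name]))
        elif actual[name] != desired[name]:
            actions.append(("update", name, desired[name]))
    for name in sorted(actual):
        if name not in desired:  # drift: present in the cluster, absent from Git
            actions.append(("delete", name, actual[name]))
    return actions


def reconcile(desired: State, actual: State) -> State:
    """Apply the plan and return the new actual state (a copy, for illustration)."""
    new_state = dict(actual)
    for verb, name, spec in plan(desired, actual):
        if verb == "delete":
            del new_state[name]
        else:
            new_state[name] = spec
    return new_state
```

Because reconciliation is declarative, running it again on an already converged state yields an empty plan; that idempotence is what lets a controller loop safely, and a Git revert simply changes `desired`, which is how rollbacks fall out of the model.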

Comparative Analysis Matrix

To illustrate the diversity and feature sets within the cloud-native ecosystem, consider a comparative analysis of leading container orchestration and serverless platforms. This table focuses on key criteria relevant to advanced pattern implementation.

| Criterion | Kubernetes (Self-Managed) | AWS EKS / Azure AKS / GCP GKE | AWS Lambda | Azure Functions | Google Cloud Functions |
|---|---|---|---|---|---|
| Management Overhead | High (Setup, upgrades, scaling) | Medium (Managed control plane, node management) | Very Low (Fully managed) | Very Low (Fully managed) | Very Low (Fully managed) |
| Flexibility/Control | Maximal (Full control over every component) | High (Control over nodes, integrations) | Limited (Runtime, memory, triggers) | Limited (Runtime, memory, triggers) | Limited (Runtime, memory, triggers) |
| Cost Model | Infrastructure cost + operational cost | Service cost + infrastructure cost | Pay-per-execution + duration + memory | Pay-per-execution + duration + memory | Pay-per-execution + duration + memory |
| Startup Time (Cold Start) | Low (Containers always running) | Low (Containers always running) | Variable (Can be high for infrequent calls) | Variable (Can be high for infrequent calls) | Variable (Can be high for infrequent calls) |
| State Management | External databases, in-memory caches | External databases, in-memory caches | External databases, object storage (stateless by design) | External databases, object storage (stateless by design) | External databases, object storage (stateless by design) |
| Integration Ecosystem | Rich (CNCF projects, Istio, Prometheus) | Rich (Native cloud services, Istio, Prometheus) | Deep (AWS services, API Gateway, SQS, SNS) | Deep (Azure services, Event Grid, Service Bus) | Deep (GCP services, Pub/Sub, Cloud Run) |
| Ideal Use Cases | Complex microservices, long-running tasks, stateful apps | Enterprise microservices, hybrid cloud, CI/CD | Event-driven, sporadic workloads, API backends | Event-driven, sporadic workloads, API backends | Event-driven, sporadic workloads, API backends |
| Learning Curve | Very High | High | Medium | Medium | Medium |
| Vendor Lock-in Risk | Low (Open source, portable) | Medium (Managed service features) | High (Proprietary APIs and ecosystem) | High (Proprietary APIs and ecosystem) | High (Proprietary APIs and ecosystem) |
| Observability | Requires external tools (Prometheus, Grafana) | Integrated with the provider's monitoring (CloudWatch, Azure Monitor, Google Cloud Monitoring) | Integrated with Amazon CloudWatch | Integrated with Azure Monitor (Application Insights) | Integrated with Google Cloud Monitoring and Cloud Logging |
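The "Cost Model" row hides a break-even point between always-on capacity and pay-per-execution billing. The back-of-the-envelope Python sketch below makes it explicit. The prices passed in are placeholders, not any provider's actual rates. Free tiers, data transfer, and the operational cost of a cluster are ignored or folded into a single hypothetical fixed monthly figure.

```python
def serverless_monthly_cost(
    invocations: int,
    avg_duration_s: float,
    memory_gb: float,
    price_per_million_requests: float,
    price_per_gb_second: float,
) -> float:
    """Pay-per-execution model: a request charge plus a duration x memory charge."""
    request_cost = invocations / 1_000_000 * price_per_million_requests
    gb_seconds = invocations * avg_duration_s * memory_gb
    return request_cost + gb_seconds * price_per_gb_second


def break_even_invocations(
    fixed_monthly_cost: float,
    avg_duration_s: float,
    memory_gb: float,
    price_per_million_requests: float,
    price_per_gb_second: float,
) -> float:
    """Monthly invocations at which serverless cost equals a fixed always-on cost."""
    per_invocation = (
        price_per_million_requests / 1_000_000
        + avg_duration_s * memory_gb * price_per_gb_second
    )
    return fixed_monthly_cost / per_invocation
```

With placeholder inputs `serverless_monthly_cost(10_000_000, 0.2, 0.5, 0.5, 0.00002)`, the request charge is 5 and the compute charge is 20 (1,000,000 GB-seconds), about 25 in total. Against a hypothetical fixed cost of 100 per month, `break_even_invocations` gives roughly 40 million invocations. Below that volume the pay-per-execution model is cheaper; above it, always-running containers start to win, before accounting for their higher management overhead.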

Open Source vs. Commercial

The debate between open-source and commercial solutions continues to shape the technological landscape. Open-source projects, like Kubernetes, Apache Kafka, and Linux, offer transparency, community-driven innovation, and often lower upfront costs. They provide flexibility and avoid vendor lock-in, aligning well with the principles of evolutionary architecture. However, they typically require significant internal expertise for deployment, maintenance, and support, shifting the cost from licensing to operational overhead.

Commercial solutions, on the other hand, provide managed services, dedicated support, and often more polished user interfaces and integrations. Cloud provider offerings (e.g., managed Kubernetes, managed Kafka) bridge this gap by offering commercial support for open-source technologies. The choice between open-source and commercial often hinges on an organization's internal capabilities, risk appetite, and total cost of ownership (TCO) considerations. For advanced patterns, leveraging mature open-source components, often delivered as managed cloud services, strikes a balance between flexibility and operational ease.

Emerging Startups and Disruptors

The innovation pipeline remains robust, with several startups poised to disrupt the market in 2027 and beyond.

- Platform Engineering & Internal Developer Platforms (IDPs): Projects and companies such as Backstage (an open-source framework created by Spotify, now a CNCF project), Humanitec, and Cortex are simplifying the creation and management of IDPs, promising to streamline developer workflows and enforce architectural best practices.

- WebAssembly (Wasm) in the Cloud: Startups like Fermyon and Cosmonic are pushing WebAssembly beyond browsers, envisioning it as a lightweight, secure, and performant runtime for serverless functions and edge computing, potentially challenging current containerization models.

- AI-Native Development Tools: Companies are integrating AI directly into the development lifecycle, offering intelligent code completion, automated test generation, and increasingly capable code generation to accelerate development.

- Decentralized Compute & Edge Computing: Innovations in orchestrating workloads closer to data sources and users, driven by companies building robust platforms for edge AI and distributed ledger technologies.

Selection Frameworks and Decision Criteria

Choosing the right advanced patterns and supporting technologies is a strategic decision with long-term implications. It requires a systematic approach, moving beyond mere technical preference to a holistic evaluation that aligns with business objectives, organizational capabilities, and financial realities.

Business Alignment

The primary driver for any architectural decision must be its alignment with overarching business goals. Technology is a means to an end, not an end in itself.

- Strategic Objectives: Does the chosen pattern facilitate faster time-to-market for new features (e.g., Microservices, EDA)? Does it enable entry into new markets (e.g., global distribution with CDNs)? Does it support a competitive differentiation strategy (e.g., complex domain modeling with DDD)?

- Business Capabilities: Map specific business capabilities (e.g., customer onboarding, order fulfillment, payment processing) to potential architectural boundaries. DDD's Bounded Contexts are particularly useful here. The chosen pattern should enhance, rather than hinder, the evolution of these core capabilities.

- Regulatory and Compliance Requirements: Certain industries (e.g., finance, healthcare) have stringent regulatory demands (GDPR, HIPAA, PCI DSS). The architectural pattern must inherently support compliance, for instance, by enabling data segregation, auditability, and robust security controls.

- Organizational Agility: Does the pattern promote independent team autonomy and faster iteration cycles, aligning with agile principles? Microservices, when implemented correctly, can significantly boost organizational agility, but poorly implemented, they can create a distributed monolith.

Technical Fit Assessment

Evaluating new patterns and technologies against the existing technology stack and organizational technical capabilities is critical to prevent integration headaches and skill gaps.

- Current State Analysis: Conduct a thorough audit of existing systems, technologies, and architectural debt. Is the current monolith a candidate for Strangler Fig? Are existing integration points suitable for an Event-Driven Architecture?

- Compatibility and Interoperability: Assess how new components or patterns will integrate with existing systems. Consider API compatibility, data format consistency, authentication mechanisms, and network protocols. An Anti-Corruption Layer (from DDD) might be necessary to bridge disparate contexts.

- Skillset and Expertise: Does the engineering team possess the necessary skills to implement and maintain the chosen pattern? If not, what is the plan for training and upskilling? Adopting complex patterns like Microservices or Event Sourcing without adequate expertise is a common pitfall.

- Operational Maturity: Does the organization have mature DevOps practices, observability tools, and incident response capabilities? Advanced patterns introduce operational complexity that requires robust practices to manage.

- Non-Functional Requirements (NFRs): Critically evaluate how the pattern addresses NFRs such as performance, scalability, security, reliability, and maintainability. For instance, EDA can significantly improve scalability and resilience, but at the cost of having to design for, test, and explain eventual consistency.

Total Cost of Ownership (TCO) Analysis

TCO extends beyond initial acquisition costs to encompass all direct and indirect expenses associated with a software system over its entire lifecycle. Advanced patterns, while offering long-term benefits, can have significant hidden costs.

- Development Costs: Initial development effort, learning curve for new patterns/technologies, tooling. Microservices, for example, typically have higher initial development costs due to distributed complexity.

- Operational Costs: Infrastructure (cloud compute, storage, networking), monitoring, logging, alerting, incident response, patching, upgrades. Serverless might reduce compute costs but can increase monitoring complexity and cost at scale.

- Maintenance Costs: Bug fixes, security updates, feature enhancements, technical debt repayment. Well-designed patterns reduce maintenance costs over time, but poorly implemented ones can escalate them.

- Training and Upskilling Costs: Investing in human capital to master new patterns and tools.

- Opportunity Costs: What other initiatives could have been pursued if resources weren't allocated to this choice?

- Compliance Costs: Auditing, reporting, and ensuring adherence to regulatory standards.
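The categories above can be totalled into a simple lifecycle model. The Python sketch below is one way to do that under stated assumptions: the field names mirror the list, every figure in the example is hypothetical, and the optional `discount_rate` (which converts future spend to present value) is a common refinement rather than something the list requires.

```python
from dataclasses import dataclass


@dataclass
class YearlyCosts:
    """Costs for one year of a system's lifecycle, one field per TCO category above."""
    development: float = 0.0
    operational: float = 0.0
    maintenance: float = 0.0
    training: float = 0.0
    opportunity: float = 0.0
    compliance: float = 0.0

    def total(self) -> float:
        return (self.development + self.operational + self.maintenance
                + self.training + self.opportunity + self.compliance)


def total_cost_of_ownership(years: list[YearlyCosts], discount_rate: float = 0.0) -> float:
    """Sum yearly costs over the lifecycle.

    With discount_rate > 0, year i (0-based) is divided by (1 + rate) ** i, converting
    future spend to present value. The default of 0 yields a plain sum.
    """
    return sum(year.total() / (1 + discount_rate) ** i for i, year in enumerate(years))


# Hypothetical three-year profile for a microservices migration:
# heavy development up front, operations and maintenance growing afterwards.
lifecycle = [
    YearlyCosts(development=500_000, operational=80_000, training=60_000),
    YearlyCosts(development=150_000, operational=120_000, maintenance=70_000, compliance=20_000),
    YearlyCosts(development=50_000, operational=130_000, maintenance=90_000, compliance=20_000),
]

print(total_cost_of_ownership(lifecycle))  # 1290000.0
```

Keeping each year explicit makes the shape of the spend visible: patterns such as Microservices tend to front-load development cost and shift the remainder into operations, which a single lump-sum estimate hides.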

ROI Calculation Models

Justifying investment in advanced patterns requires a clear understanding of the Return on Investment (ROI). This involves quantifying both the costs and the benefits, which can be challenging for architectural decisions.

- Direct ROI: Tangible benefits such as reduced infrastructure costs (e.g., optimized resource utilization with serverless), increased revenue from faster feature delivery, or reduced defect rates.

- Indirect ROI: Less tangible but equally important benefits like improved developer productivity, enhanced organizational agility, better talent attraction/retention, reduced business risk, or improved customer satisfaction. These often require proxy metrics.

- Scenario Planning: Model ROI under different scenarios (e.g., high growth, stable market) to understand the financial resilience of the architectural choice.

- Weighted Scoring Models: Assign weights to various business benefits (e.g., 40% for time-to-market, 30% for scalability, 30% for cost reduction), making sure they sum to 100%, then score each architectural option against these criteria to derive a comparative score that complements the ROI figures.
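Two of these models reduce to short calculations. The sketch below assumes the textbook ROI ratio, (benefits - costs) / costs, and a weighted sum whose weights add up to 1.0; the option names, 1-10 scores, and benefit estimates are all hypothetical.

```python
def roi(benefits: float, costs: float) -> float:
    """Return on investment as a ratio: net gain divided by cost (0.25 means 25%)."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return (benefits - costs) / costs


def weighted_score(weights: dict[str, float], scores: dict[str, float]) -> float:
    """Combine per-criterion scores into one comparative score; weights must sum to 1."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return sum(weight * scores[criterion] for criterion, weight in weights.items())


# Hypothetical weights (summing to 1.0, i.e., 100%) and hypothetical 1-10 review scores.
weights = {"time_to_market": 0.4, "scalability": 0.3, "cost_reduction": 0.3}
options = {
    "modular_monolith": {"time_to_market": 7, "scalability": 5, "cost_reduction": 8},
    "microservices": {"time_to_market": 8, "scalability": 9, "cost_reduction": 4},
}
for name, scores in options.items():
    print(f"{name}: {weighted_score(weights, scores):.2f}")

# Scenario planning: one option's ROI under different (hypothetical) benefit estimates.
cost = 1_000_000
for scenario, benefits in {"high_growth": 1_800_000, "stable_market": 1_100_000}.items():
    print(f"{scenario}: {roi(benefits, cost):.0%}")
```

Rejecting weights that do not sum to 1 is a deliberate guard: an unnormalized set of weights silently inflates or deflates every option's score and makes comparisons across reviews meaningless.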

Risk Assessment Matrix

Identifying and mitigating potential risks is paramount. A structured risk assessment matrix helps categorize and prioritize risks associated with pattern selection and implementation.

- Technical Risks: Integration challenges, performance bottlenecks, security vulnerabilities, unproven technology, complexity overload.

  - Mitigation: PoCs, expert reviews, phased rollout, robust testing.

- Organizational Risks: Skill gaps, resistance to change, lack of leadership buy-in, communication breakdowns, team fragmentation.

  - Mitigation: Training programs, change management, clear communication, cross-functional teams.

- Financial Risks: Budget overruns, higher-than-expected TCO, negative ROI.

  - Mitigation: Detailed cost modeling, incremental investment, early feedback loops.

- Market Risks: Technology obsolescence, shifting business requirements, competitor innovation.

  - Mitigation: Evolutionary architecture, continuous monitoring of trends, modular design for flexibility.
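The matrix groups risks by category. To prioritize within it, a common practice (assumed here, not prescribed above) is to rate each risk's likelihood and impact on a 1-5 scale and rank by their product. The score cut-offs and sample risks in this sketch are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    category: str      # Technical, Organizational, Financial, or Market
    description: str
    likelihood: int    # 1 (rare) .. 5 (almost certain)
    impact: int        # 1 (negligible) .. 5 (severe)
    mitigation: str

    @property
    def score(self) -> int:
        return self.likelihood * self.impact

    @property
    def level(self) -> str:
        # Illustrative cut-offs on the 1-25 score range.
        if self.score >= 15:
            return "high"
        if self.score >= 8:
            return "medium"
        return "low"


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Highest-scoring risks first; ties broken by impact."""
    return sorted(risks, key=lambda r: (r.score, r.impact), reverse=True)


register = [
    Risk("Technical", "Event store cannot meet write throughput", 3, 5, "PoC with load test"),
    Risk("Organizational", "Team lacks distributed-systems experience", 4, 4, "Training, pairing"),
    Risk("Financial", "Cloud spend exceeds forecast", 3, 3, "FinOps reviews"),
    Risk("Market", "Chosen broker loses vendor support", 1, 4, "Keep messaging behind a port"),
]

for risk in prioritize(register):
    print(f"{risk.level:<6} {risk.score:>2}  {risk.category}: {risk.description}")
```

Breaking ties by impact reflects a common preference for addressing severe-but-unlikely risks ahead of mild-but-likely ones with the same product; other tie-breakers are equally defensible.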

Proof of Concept Methodology

A well-executed Proof of Concept (PoC) is invaluable for validating assumptions, mitigating risks, and gathering practical experience before committing to a full-scale implementation.

- Define Clear Objectives: What specific questions does the PoC need to answer? (e.g., Can our team implement a Bounded Context using DDD principles within a given timeframe? Can an Event Sourcing store meet our performance requirements?).

- Scope Definition: Keep the PoC scope narrow and focused. It should demonstrate feasibility, not build a production-ready system.

- Success Criteria: Establish measurable success criteria. What constitutes a successful PoC? (e.g., X TPS throughput achieved, Y representative use cases implemented end to end with the pattern, Z developers successfully onboarded).

- Limited Resources & Timebox: Allocate dedicated, limited resources and a strict timebox (e.g., 2-4 weeks) to prevent scope creep.

- Document Findings & Lessons Learned: Capture technical challenges, performance metrics, developer feedback, and any unexpected discoveries. This documentation is crucial for informing subsequent architectural decisions.

- Iterate or Pivot: Based on PoC results, decide whether to proceed with the pattern, modify the approach, or abandon it entirely.
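Writing the success criteria down as data before the timebox starts makes the final go/no-go decision mechanical rather than negotiable. The sketch below assumes hypothetical targets for an Event Sourcing PoC; the metric names, thresholds, and measurements are placeholders.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Criterion:
    name: str
    target: float
    meets: Callable[[float, float], bool]  # (measured, target) -> passed?


def at_least(measured: float, target: float) -> bool:
    return measured >= target


def at_most(measured: float, target: float) -> bool:
    return measured <= target


def evaluate(criteria: list[Criterion], measured: dict[str, float]) -> dict[str, bool]:
    """Pass/fail per criterion; a criterion with no measurement counts as failed."""
    return {c.name: c.name in measured and c.meets(measured[c.name], c.target)
            for c in criteria}


def decide(results: dict[str, bool]) -> str:
    return "proceed" if all(results.values()) else "iterate or pivot"


# Hypothetical targets, agreed before the PoC starts.
criteria = [
    Criterion("write_throughput_tps", 2_000, at_least),
    Criterion("p99_append_latency_ms", 50, at_most),
    Criterion("developers_onboarded", 3, at_least),
]
measured = {"write_throughput_tps": 2_450, "p99_append_latency_ms": 72, "developers_onboarded": 4}

results = evaluate(criteria, measured)
print(results)
print(decide(results))  # iterate or pivot
```

Treating a missing measurement as a failure guards against a PoC being declared successful simply because an inconvenient metric was never collected.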

Vendor Evaluation Scorecard

When selecting commercial tools or services that support advanced patterns (e.g., managed Kafka, API Gateways, observability platforms), a structured vendor evaluation scorecard ensures objective decision-making.

- Functionality & Feature Set: Does the vendor's offering meet the technical requirements of the pattern? (e.g., does the messaging broker support exactly-once processing semantics for EDA?).

- Performance & Scalability: Benchmarking results, reported SLAs, and ability to handle projected load.

- Security & Compliance: Certifications (SOC 2, ISO 27001), data encryption, access controls, incident response capabilities.

- Reliability & Support: Uptime guarantees, support channels, response times, escalation procedures.

- Cost & Licensing: Transparent pricing model, long-term costs, flexibility in licensing.

- Integration & Ecosystem: Ease of integration with existing tools, API quality, community support.

- Vendor Viability & Roadmap: Financial stability of the vendor, future roadmap alignment with organizational strategy.

- References & Case Studies: Customer testimonials, real-world success stories.

Implementation Methodologies

The successful adoption of advanced software design patterns is as much about the "how" of implementation as it is about the "what." A structured methodology ensures that complex architectural shifts are managed systematically, iteratively, and with continuous feedback, minimizing disruption and maximizing value.

Phase 0: Discovery and Assessment

Before any significant architectural change, a thorough understanding of the current state and future needs is paramount. This phase lays the groundwork for all subsequent activities.

- Business Capability Mapping: Collaborate with business stakeholders to identify and map core business capabilities, value streams, and domain boundaries. This is crucial for applying Domain-Driven Design principles to define Bounded Contexts.

- Technical Debt Audit: Assess the existing codebase for technical debt, architectural anti-patterns, and areas of high coupling. Identify systems that are critical but brittle, or those that are difficult to evolve.

- Infrastructure and Operations Review: Evaluate current infrastructure (on-prem, cloud, hybrid), deployment pipelines, monitoring capabilities, and operational processes. Determine the organization's readiness for distributed systems and cloud-native paradigms.

- Organizational Readiness Assessment: Evaluate team skills, existing agile practices, and cultural openness to change. Identify potential skill gaps and areas where organizational structures might conflict with desired architectural outcomes (Conway's Law).

- Stakeholder Alignment: Engage C-level executives, product owners, and engineering leaders to gain a shared understanding of the problem, the proposed strategic direction, and the expected benefits.

Phase 1: Planning and Architecture

With a clear understanding of the landscape, this phase focuses on designing the target architecture and formulating a detailed plan.

- Domain Modeling (DDD): Deep dive into the identified business domains. Use techniques like Event Storming to collaboratively define the Ubiquitous Language, identify Aggregates, Entities, Value Objects, and precisely delineate Bounded Contexts. Design the core domain model for each context.

- Architectural Design: Select the most appropriate advanced patterns (e.g., Clean Architecture for internal structure, Microservices for decomposition, EDA for integration) based on the discovery phase. Create high-level architectural diagrams, data flow diagrams, and component interaction models. Define service boundaries, APIs, and communication protocols.

- Technology Stack Selection: Choose specific technologies, frameworks, and tools that align with the architectural patterns and organizational capabilities. This includes cloud services, programming languages, databases, and messaging systems.

- Detailed Implementation Plan: Break down the architectural vision into smaller, manageable increments. Define epics and user stories. Outline the development roadmap, resource allocation, and timelines.

- Security and Compliance Design: Integrate security considerations from the outset. Define authentication, authorization, data encryption, and audit logging mechanisms. Ensure the design meets all relevant regulatory requirements.

- Documentation and Approvals: Formalize architectural decisions in design documents (e.g., Architecture Decision Records, or ADRs). Secure approvals from key stakeholders, ensuring alignment and buy-in.

Phase 2: Pilot Implementation

Starting small is crucial for validating architectural choices and learning from early experience before scaling. This phase focuses on a minimal viable implementation.

- Select a Vertical Slice: Choose a small, non-critical but representative business capability or Bounded Context for the pilot. This could be a new feature or a small part of a legacy system targeted by the Strangler Fig pattern.

- Build a Foundational Component: Implement one or two core services or modules using the chosen advanced patterns (e.g., one Clean Architecture application, one Event Sourcing aggregate). Focus on establishing the foundational plumbing and infrastructure.

- Establish CI/CD Pipeline: Set up automated build, test, and deployment pipelines for the pilot components. This validates the DevOps tooling and processes.

- Implement Observability: Integrate monitoring, logging, and tracing for the pilot. This is critical for understanding behavior in a distributed environment.

- Validate Key Assumptions: Use the pilot to test performance, scalability, security, and developer experience. Does the chosen database work as expected? Is the messaging system performing adequately?

- Gather Feedback: Collect continuous feedback from the development team, operations team, and product owners on the new patterns, tools, and processes.

Phase 3: Iterative Rollout

Building on the lessons learned from the pilot, this phase involves incrementally expanding the implementation across the organization, typically through an agile, iterative approach.

- Feature-Driven Development: Prioritize business features and deliver them in small, incremental releases. Each iteration builds upon the established architectural patterns and infrastructure.

- Team Expansion and Training: Gradually onboard more teams, providing necessary training and support on the new patterns and technologies. Establish communities of practice for knowledge sharing.

- Migration Strategy (Strangler Fig): If dealing with a monolith, continue to identify and "strangle" functionalities, moving them into new services built with advanced patterns. Prioritize areas with high business value or high technical debt.

- Infrastructure Scaling: Continuously scale the underlying infrastructure (cloud resources, databases, messaging systems) to support the growing number of services and increasing load.

- Automated Testing and Quality Assurance: Maintain a high level of automated testing (unit, integration, end-to-end) to ensure code quality and prevent regressions across the expanding system.

- Regular Reviews: Conduct regular architectural reviews, code reviews, and post-mortem analyses to ensure adherence to design principles and continuous improvement.

Phase 4: Optimization and Tuning

Once components are deployed and in production, continuous optimization is essential to ensure they meet performance, cost, and reliability targets.

- Performance Profiling: Use profiling tools to identify bottlenecks in code, database queries, and network communication. Optimize critical paths.

- Cost Optimization (FinOps): Regularly review cloud spending and identify opportunities for cost savings (e.g., rightsizing instances, reserved instances, optimizing serverless function execution).

- Resource Tuning: Adjust resource allocations (CPU, memory) for services, databases, and message brokers based on actual usage patterns and load tests.

- Query Optimization: Tune database queries, add/remove indexes, and consider alternative data storage solutions (e.g., read models for CQRS) for performance-critical operations.

- Caching Strategies: Implement and refine caching mechanisms at various layers (client, CDN, API Gateway, application, database) to reduce latency and load.

- Resilience Testing: Conduct chaos engineering experiments to proactively identify and fix weaknesses in the system under failure conditions.
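Of the caching layers listed above, the application layer is the one teams write code for directly. Below is a minimal cache-aside sketch with time-based expiry; the class, loader, and product data are hypothetical, and a production cache would add size limits, thread safety, and usually an external store such as Redis.

```python
import time
from typing import Callable, Generic, Hashable, TypeVar

V = TypeVar("V")


class CacheAside(Generic[V]):
    """Application-layer cache-aside: check the cache, fall back to the loader on a miss."""

    def __init__(self, loader: Callable[[Hashable], V], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._loader = loader
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested deterministically
        self._entries: dict[Hashable, tuple[float, V]] = {}

    def get(self, key: Hashable) -> V:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]                       # fresh hit
        value = self._loader(key)                 # miss or expired: load from source of truth
        self._entries[key] = (now + self._ttl, value)
        return value

    def invalidate(self, key: Hashable) -> None:
        """Call after a write so the next read reloads from the source of truth."""
        self._entries.pop(key, None)


# Usage with a fake clock and a loader that records how often it is called.
calls = []

def load_product(product_id):
    calls.append(product_id)
    return {"id": product_id, "price": 10}

fake_now = [0.0]
cache = CacheAside(load_product, ttl_seconds=30, clock=lambda: fake_now[0])
cache.get("p1")
cache.get("p1")        # served from the cache
fake_now[0] = 31.0
cache.get("p1")        # expired, so reloaded
print(len(calls))      # 2
```

The TTL bounds how stale a read can be, while explicit invalidation after writes keeps the common read-after-write path fresh; choosing between them per data type is most of the tuning work this phase describes.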

Phase 5: Full Integration

The final phase solidifies the new architectural patterns as the standard way of working, making them an integral part of the organization's technological fabric.

- Decommissioning Legacy Systems: Fully decommission old monolithic components or systems that have been successfully "strangled" and replaced. This reduces maintenance burden and operational costs.

- Standardization and Best Practices: Document and institutionalize the new architectural patterns, coding standards, and operational procedures. Establish clear guidelines for future development.

- Knowledge Transfer and Mentorship: Ensure knowledge is widely distributed within the organization. Establish mentorship programs to onboard new hires and upskill existing staff on advanced patterns.

- Platformization: Mature the internal developer platform (IDP) to provide self-service capabilities for creating and managing services built with the new architectural patterns, further accelerating development.

- Continuous Evolution: Recognize that architecture is never "done." Establish mechanisms for continuous architectural review, adaptation to new technologies, and iterative refinement of the patterns in use.

Best Practices and Design Patterns

This section delves into the five essential advanced patterns that form the core of this handbook. Each pattern addresses a critical challenge in modern software engineering, offering a proven, strategic approach to building complex, resilient, and scalable systems. Understanding when, why, and how to apply these patterns is fundamental for architects and lead engineers.

Architectural Pattern A: Domain-Driven Design (DDD) with Tactical Patterns

When and How to Use It: Domain-Driven Design (DDD), championed by Eric Evans, is a software development approach that places the primary focus on the core business domain and its logic. It is particularly effective for complex systems where the business rules are intricate, highly nuanced, and subject to frequent change. DDD emphasizes collaboration between domain experts and technical teams to create a shared understanding—the Ubiquitous Language—and a rich, expressive domain model. DDD is less about specific technologies and more about a mindset and a set of principles for managing complexity in the problem space.

Core Concepts and Tactical Patterns:

- Ubiquitous Language: A common, precise language shared by domain experts and developers, used consistently in all discussions, documentation, and code. This prevents miscommunication and ensures that the software accurately reflects the business domain.

- Bounded Context: The most critical strategic pattern in DDD. It defines a logical boundary within which a particular domain model is consistently applied. Each Bounded Context has its own Ubiquitous Language, and its model is internally consistent. Interactions between Bounded Contexts are explicit and managed, often through well-defined APIs or events, preventing model leakage and ensuring clear separation of concerns. For example, a "Customer" in a Sales Bounded Context might be different from a "Customer" in a Support Bounded Context.

- Entities: Objects defined by their identity, not by their attributes. An Entity has a unique identifier that remains constant over time, even if its attributes change (e.g., a Customer with a unique customerId).

- Value Objects: Objects defined by their attributes, not by their identity. They are immutable and represent descriptive aspects of the domain (e.g., a Money object with amount and currency). Value Objects make intent explicit and prevent subtle bugs, such as adding amounts in different currencies or one object silently mutating a value that another object shares.

- Aggregates: Clusters of Entities and Value Objects treated as a single unit for data changes. An Aggregate has a single Aggregate Root, which is an Entity responsible for maintaining the consistency of the entire cluster. All external access to the Aggregate must go through its Root, which enforces the invariants. This makes the Aggregate the unit of transactional consistency.

- Domain Services: Operations that involve multiple Aggregates or Entities and don't naturally fit within a single Entity or Value Object. They encapsulate business logic that spans multiple domain objects.

- Repositories: Provide a mechanism for encapsulating the storage, retrieval, and search behavior of Aggregates. They abstract away database specifics, allowing the domain model to remain persistence-agnostic.
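The tactical building blocks can be sketched in a few lines of Python. This is an illustrative model, not a prescribed implementation: `Money` is a Value Object, `Order` is an Entity acting as Aggregate Root over `OrderLine` values, and the specific invariants (including the hypothetical 50-line limit) are assumptions chosen to show where business rules live. A Repository is omitted; it would load and save whole `Order` aggregates.

```python
import uuid
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Money:
    """Value Object: defined by its attributes, immutable, compared by value."""
    amount: Decimal
    currency: str

    def __add__(self, other: "Money") -> "Money":
        if self.currency != other.currency:
            raise ValueError("cannot add different currencies")
        return Money(self.amount + other.amount, self.currency)


@dataclass(frozen=True)
class OrderLine:
    sku: str
    quantity: int
    unit_price: Money

    def subtotal(self) -> Money:
        return Money(self.unit_price.amount * self.quantity, self.unit_price.currency)


class Order:
    """Aggregate Root (an Entity): identity is order_id; all changes go through its methods."""

    MAX_LINES = 50  # hypothetical business invariant

    def __init__(self, order_id: str, currency: str) -> None:
        self.order_id = order_id
        self.currency = currency
        self._lines: list[OrderLine] = []
        self.submitted = False

    def add_line(self, sku: str, quantity: int, unit_price: Money) -> None:
        # Invariants are enforced here, so no caller can put the aggregate in an invalid state.
        if self.submitted:
            raise ValueError("cannot modify a submitted order")
        if quantity <= 0:
            raise ValueError("quantity must be positive")
        if unit_price.currency != self.currency:
            raise ValueError("line currency must match order currency")
        if len(self._lines) >= self.MAX_LINES:
            raise ValueError("order has too many lines")
        self._lines.append(OrderLine(sku, quantity, unit_price))

    def submit(self) -> None:
        if not self._lines:
            raise ValueError("cannot submit an empty order")
        self.submitted = True

    def total(self) -> Money:
        total = Money(Decimal("0"), self.currency)
        for line in self._lines:
            total = total + line.subtotal()
        return total

    def __eq__(self, other: object) -> bool:
        # Entity equality is by identity, not by attributes.
        return isinstance(other, Order) and other.order_id == self.order_id

    def __hash__(self) -> int:
        return hash(self.order_id)


order = Order(str(uuid.uuid4()), "EUR")
order.add_line("BOOK-42", 2, Money(Decimal("12.50"), "EUR"))
order.add_line("PEN-7", 3, Money(Decimal("1.20"), "EUR"))
order.submit()
print(order.total())  # Money(amount=Decimal('28.60'), currency='EUR')
```

Note the contrast in equality: two `Money` instances with the same amount and currency are interchangeable, whereas two `Order` objects are the same only if they share an `order_id`.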

Architectural Pattern B: Clean Architecture (Ports & Adapters)

When and How to Use It: Clean Architecture, Robert C. Martin's synthesis of earlier approaches such as Hexagonal Architecture (Ports and Adapters) and Onion Architecture, organizes code into concentric layers in which source-code dependencies point only inward. It ensures that the core business logic (the "domain" or "entities") remains independent of external concerns like databases, UI frameworks, and external services. This independence makes the system highly testable, maintainable, and adaptable to changes in external technologies.

Core Concepts:

- Independence from Frameworks: The architecture should not depend on the existence of some library of feature-laden software. Frameworks are tools, not dictators.

- Testability: The business rules can be tested without the UI, database, web server, or any other external element.

- Independence from UI: The UI can change easily, without changing the rest of the system.

- Independence from Database: You can swap out your database (SQL, NoSQL, in-memory) without changing your business rules.

- Independence from any external agency: Your business rules simply don't know anything about the outside world.

The layers, from outermost to innermost:

- Frameworks & Drivers (Presentation/Infrastructure): The outermost layer, containing web frameworks (Spring Boot, ASP.NET Core), UI libraries (React, Angular), databases (SQL, NoSQL), message brokers, and external APIs. Code here is mostly configuration and glue that connects these details to the adapters in the next layer inward.

- Interface Adapters: Contains controllers, presenters, gateways, and DTOs (Data Transfer Objects). This layer converts data from the outermost layer (e.g., HTTP requests) into the format the Use Cases layer expects, and converts use-case results back into formats suitable for the UI, database, or external services. Its gateways and repositories implement the output ports (e.g., persistence interfaces) that the Use Cases layer declares.

- Use Cases (Application Logic): Contains the application-specific business rules. Use cases orchestrate how the application responds to input, invoke the domain Entities, and coordinate the flow of data. This layer owns the ports: it defines input ports (the operations it offers, such as commands) and output ports (the persistence or messaging capabilities it needs). Because the interfaces live here, outer layers depend on the use cases and never the reverse.

- Entities (Domain/Business Rules): The innermost layer, containing the enterprise-wide business rules. These are the core domain objects (Entities and Value Objects from DDD) that encapsulate the most fundamental and stable business logic. This layer has no dependencies on any other layer.
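The dependency rule is easiest to see in code. This Python sketch assumes a hypothetical funds-withdrawal use case: the output port is a `typing.Protocol` declared alongside the use case, the in-memory repository is an adapter implementing it, and a small controller-style function converts an external payload into a command. A stricter version would also declare an explicit input-port interface for `WithdrawFunds`.

```python
from dataclasses import dataclass
from typing import Protocol


# --- Entities (innermost): no imports from outer layers ---
@dataclass
class Account:
    account_id: str
    balance_cents: int

    def withdraw(self, amount_cents: int) -> None:
        if amount_cents <= 0:
            raise ValueError("amount must be positive")
        if amount_cents > self.balance_cents:
            raise ValueError("insufficient funds")
        self.balance_cents -= amount_cents


# --- Use Cases: this layer owns its ports ---
class AccountRepository(Protocol):
    """Output port: what the use case needs from persistence."""
    def get(self, account_id: str) -> Account: ...
    def save(self, account: Account) -> None: ...


@dataclass(frozen=True)
class WithdrawCommand:
    """Input data for the use case."""
    account_id: str
    amount_cents: int


class WithdrawFunds:
    """Use case: orchestrates the entity and talks to persistence only through the port."""
    def __init__(self, accounts: AccountRepository) -> None:
        self._accounts = accounts

    def execute(self, command: WithdrawCommand) -> int:
        account = self._accounts.get(command.account_id)
        account.withdraw(command.amount_cents)
        self._accounts.save(account)
        return account.balance_cents


# --- Interface Adapters: implement the port (a real one might wrap an SQL client) ---
class InMemoryAccountRepository:
    def __init__(self) -> None:
        self._rows: dict[str, int] = {}

    def get(self, account_id: str) -> Account:
        return Account(account_id, self._rows[account_id])

    def save(self, account: Account) -> None:
        self._rows[account.account_id] = account.balance_cents


def handle_withdraw_request(payload: dict, use_case: WithdrawFunds) -> dict:
    """Controller-style adapter: converts an external request (e.g., parsed JSON) to a command."""
    command = WithdrawCommand(payload["account_id"], int(payload["amount_cents"]))
    return {"account_id": command.account_id, "balance_cents": use_case.execute(command)}


# --- Frameworks & Drivers / composition root: wire adapters to the use case ---
repo = InMemoryAccountRepository()
repo.save(Account("acc-1", 10_000))
use_case = WithdrawFunds(repo)
print(handle_withdraw_request({"account_id": "acc-1", "amount_cents": "2500"}, use_case))
# {'account_id': 'acc-1', 'balance_cents': 7500}
```

Swapping the in-memory repository for a database-backed one changes only the adapter and the wiring at the bottom; `Account` and `WithdrawFunds` are untouched, which is also what lets them be unit-tested without infrastructure.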

Architectural Pattern C: Event-Driven Architecture (EDA) with Event Sourcing & CQRS

When and How to Use It: Event-Driven Architecture (EDA) is a paradigm centered around the production, detection, consumption, and reaction to events. It promotes loose coupling, high scalability, and enhanced resilience, making it ideal for distributed systems, real-time data processing, and complex integration scenarios. When combined with Event Sourcing and Command Query Responsibility Segregation (CQRS), it offers a powerful approach to data management and consistency in highly dynamic environments.

Core Concepts:

- Event: An immutable record of something that has happened in the past. Events are facts (e.g., OrderPlaced, PaymentReceived, CustomerDeactivated), and they are broadcast to interested consumers.

- Event Producer (Publisher): A component that detects a change in its state and publishes an event to a message broker.

- Event Consumer (Subscriber): A component that subscribes to events from the message broker and reacts to them by updating its own state or triggering further actions.

- Message Broker: A middleware (e.g., Apache Kafka, RabbitMQ, AWS SQS/SNS, Google Pub/Sub) that facilitates asynchronous communication between producers and consumers, providing decoupling and often persistence for events.

- Event Sourcing: Rather than storing only the current state, the system persists every state change as an immutable event in an append-only event store; current state is derived by replaying those events.

  - Benefits: Provides a complete audit trail, enables temporal queries (e.g., "What was the state of the order at 3 PM yesterday?"), simplifies debugging, and is a natural fit for EDA. It also sidesteps the object-relational impedance mismatch, because what gets persisted is a sequence of events rather than a mapped object graph.

  - Considerations: Requires a specialized event store (e.g., EventStoreDB, or Kafka used as an event log), makes querying current state directly more difficult, and can lead to performance issues when event streams grow long and must be replayed in full; periodic snapshots are the usual mitigation.

- Command Query Responsibility Segregation (CQRS): Separates the model that handles writes from the model that serves reads.

  - Command Side: Handles commands (requests to change state), validates them, and often uses Event Sourcing to persist changes as events. Optimized for writes and transactional consistency.

  - Query Side (Read Model): Consumes events from the command side (or an event store) and projects them into denormalized, read-optimized data stores (e.g., relational database, NoSQL document store, search index). Optimized for reads, and typically eventually consistent with the command side.

  - Benefits: Enables independent scaling of read and write workloads, simplifies complex queries, allows tailored data models for different clients, and improves read performance.

  - Considerations: Introduces eventual consistency and adds architectural complexity: two distinct models must be maintained, and the synchronization between them must be handled carefully.
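The moving parts of Event Sourcing and CQRS fit in one small sketch. Everything here is a hypothetical in-process stand-in: `EventStore` plays the role of a real event store and broker, the projection runs synchronously (in production it usually consumes events asynchronously, which is where eventual consistency appears), and optimistic concurrency checks on stream versions are omitted for brevity.

```python
from dataclasses import dataclass
from typing import Callable, Union


@dataclass(frozen=True)
class OrderPlaced:
    order_id: str
    amount_cents: int


@dataclass(frozen=True)
class PaymentReceived:
    order_id: str
    amount_cents: int


Event = Union[OrderPlaced, PaymentReceived]


class EventStore:
    """Append-only per-stream log that notifies subscribers (stand-in for store + broker)."""
    def __init__(self) -> None:
        self._streams: dict[str, list[Event]] = {}
        self._subscribers: list[Callable[[Event], None]] = []

    def append(self, stream_id: str, event: Event) -> None:
        self._streams.setdefault(stream_id, []).append(event)
        for handler in self._subscribers:
            handler(event)

    def read(self, stream_id: str) -> list[Event]:
        return list(self._streams.get(stream_id, []))

    def subscribe(self, handler: Callable[[Event], None]) -> None:
        self._subscribers.append(handler)


@dataclass
class OrderState:
    placed: bool = False
    amount_due_cents: int = 0


def apply(state: OrderState, event: Event) -> OrderState:
    """Pure transition function; current state is a fold of apply over the stream."""
    if isinstance(event, OrderPlaced):
        return OrderState(placed=True, amount_due_cents=event.amount_cents)
    if isinstance(event, PaymentReceived):
        return OrderState(state.placed, state.amount_due_cents - event.amount_cents)
    return state


def rehydrate(events: list[Event]) -> OrderState:
    state = OrderState()
    for event in events:
        state = apply(state, event)
    return state


# --- Command side: validate against state rebuilt from events, then append new events ---
def place_order(store: EventStore, order_id: str, amount_cents: int) -> None:
    if rehydrate(store.read(order_id)).placed:
        raise ValueError("order already placed")
    store.append(order_id, OrderPlaced(order_id, amount_cents))


def record_payment(store: EventStore, order_id: str, amount_cents: int) -> None:
    state = rehydrate(store.read(order_id))
    if not state.placed:
        raise ValueError("unknown order")
    if amount_cents > state.amount_due_cents:
        raise ValueError("overpayment")
    store.append(order_id, PaymentReceived(order_id, amount_cents))


# --- Query side: a denormalized read model maintained by a projection ---
class OutstandingBalances:
    def __init__(self) -> None:
        self.by_order: dict[str, int] = {}

    def project(self, event: Event) -> None:
        if isinstance(event, OrderPlaced):
            self.by_order[event.order_id] = event.amount_cents
        elif isinstance(event, PaymentReceived):
            self.by_order[event.order_id] -= event.amount_cents


store = EventStore()
read_model = OutstandingBalances()
store.subscribe(read_model.project)
place_order(store, "o-1", 5_000)
record_payment(store, "o-1", 2_000)
print(read_model.by_order)               # {'o-1': 3000}
print(rehydrate(store.read("o-1")[:1]))  # temporal query: state after the first event
```

Replaying a prefix of the stream is what makes temporal queries cheap to express; in a real store the prefix would be selected by timestamp or version rather than by list slicing.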

Architectural Pattern D: Microservices and Distributed Saga Pattern

When and How to Use It: Microservices Architecture is an architectural style that structures an application as a collection of loosely coupled services. Each service is independently deployable, owned by a small, autonomous team, and communicates with others via lightweight mechanisms (often APIs or message brokers). It's ideal for large, complex applications that require high scalability, agility, and resilience, and for organizations that can align their team structure with the intended service boundaries, since Conway's Law predicts that a system's structure will mirror the communication structure of the teams that build it.

Core Concepts:

- Service Decomposition: Breaking down a monolithic application into smaller, focused services, often aligned with Bounded Contexts from DDD.

- Independent Deployment: Each service can be built, tested, and deployed independently of others.

- Decentralized Data Management: Each service typically owns its data store, avoiding shared databases that lead to tight coupling.

- Communication: Services communicate synchronously (e.g., REST, gRPC) or asynchronously (e.g., message queues, event streams).

- Resilience Patterns: Circuit Breaker, Bulkhead, Retry, Timeout, Fallback are crucial for handling failures in a distributed environment.

- API Gateway: A single entry point for all client requests, routing them to the appropriate microservice, and handling cross-cutting concerns like authentication, rate limiting, and caching.

- Service Discovery: Mechanisms for services to find and communicate with each other (e.g., Kubernetes DNS, Consul, Eureka).
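In production, resilience patterns usually come from a library (e.g., Resilience4j on the JVM, Polly on .NET) or a service mesh, but the mechanics are worth seeing once. This single-threaded Python sketch combines a consecutive-failure Circuit Breaker with Retry using exponential backoff and full jitter; the thresholds, the `inventory_lookup` dependency, and the injectable clock and sleep are assumptions for illustration.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised immediately, without calling the dependency, while the circuit is open."""


class CircuitBreaker:
    """Consecutive-failure breaker: closed -> open -> half-open trial -> closed or open."""

    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self._opened_at is not None and self._clock() - self._opened_at < self.reset_timeout:
            raise CircuitOpenError("dependency marked unavailable")
        # Either closed, or the timeout has elapsed and this call is the half-open trial.
        try:
            result = fn()
        except Exception:
            self._failures += 1
            if self._opened_at is not None or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()  # open, or re-open after a failed trial
            raise
        self._failures = 0
        self._opened_at = None  # success closes the circuit
        return result


def retry(fn: Callable[[], T], attempts: int = 3, base_delay: float = 0.1,
          sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry with exponential backoff and full jitter; an open circuit is not retried."""
    for attempt in range(attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # fail fast: retrying an open circuit only adds load and latency
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(random.uniform(0, base_delay * 2 ** attempt))
    raise ValueError("attempts must be at least 1")


# Usage: a hypothetical downstream call that is currently failing.
fake_now = [0.0]
breaker = CircuitBreaker(failure_threshold=2, reset_timeout=10.0, clock=lambda: fake_now[0])

def inventory_lookup() -> int:
    raise ConnectionError("inventory service unreachable")  # simulated outage

try:
    retry(lambda: breaker.call(inventory_lookup), attempts=3, sleep=lambda _: None)
except CircuitOpenError:
    print("circuit opened; failing fast")
```

Placing the breaker inside the retry loop means the first two attempts reach the failing service, after which the breaker trips and the third attempt fails fast instead of adding load to a struggling dependency.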

Distributed Saga Pattern: A Saga is a sequence of local transactions, where each local transaction updates data within a single service and then publishes an event or message that triggers the next step. If a local transaction fails, the saga executes compensating transactions that undo the changes made by the preceding local transactions, returning the system to a consistent state.

- Orchestration Saga: A centralized orchestrator (a dedicated service or workflow engine) explicitly manages the sequence of steps. It sends commands to participant services and processes their responses or events.

  - Benefits: Easier to implement and manage, especially for complex workflows, because the flow logic is centralized in one place.

  - Considerations: The orchestrator can become a single point of failure or a bottleneck, and it is coupled to every participant.

- Choreography Saga: Each service involved in the saga publishes events that trigger the next service in the sequence. There is no central orchestrator; each service knows which events it reacts to and which events it emits.

  - Benefits: Highly decoupled, with no central coordinator to fail, and new steps can be added by subscribing to existing events.

  - Considerations: The overall flow is harder to understand, debug, and monitor, and complex compensating transactions are harder to manage; without careful design, services can end up with circular event dependencies.
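The core loop of an Orchestration Saga can be sketched in-process. This is a simplification under stated assumptions: the participants are plain functions rather than remote services, and the saga's progress lives in memory. A real orchestrator (for example, a workflow engine such as Temporal or AWS Step Functions) persists saga state durably, communicates asynchronously, and retries compensations, which must therefore be idempotent. All step names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SagaStep:
    name: str
    action: Callable[[dict], None]        # local transaction in one participant service
    compensation: Callable[[dict], None]  # semantic undo for that transaction


class SagaFailed(Exception):
    pass


def run_saga(steps: list[SagaStep], context: dict) -> list[str]:
    """Orchestrator: run steps in order; on failure, compensate completed steps in reverse."""
    completed: list[SagaStep] = []
    log: list[str] = []
    for step in steps:
        try:
            step.action(context)
        except Exception as exc:
            log.append(f"{step.name} failed: {exc}")
            for done in reversed(completed):
                done.compensation(context)
                log.append(f"compensated {done.name}")
            raise SagaFailed("; ".join(log)) from exc
        completed.append(step)
        log.append(f"{step.name} ok")
    return log


# Hypothetical participants for an order-fulfilment saga.
def reserve_inventory(ctx): ctx["reserved"] = True
def release_inventory(ctx): ctx["reserved"] = False
def charge_payment(ctx): raise RuntimeError("card declined")  # simulated failure
def refund_payment(ctx): ctx["refunded"] = True
def schedule_shipping(ctx): ctx["shipped"] = True
def cancel_shipping(ctx): ctx["shipped"] = False

order = {"order_id": "o-1"}
steps = [
    SagaStep("reserve_inventory", reserve_inventory, release_inventory),
    SagaStep("charge_payment", charge_payment, refund_payment),
    SagaStep("schedule_shipping", schedule_shipping, cancel_shipping),
]
try:
    run_saga(steps, order)
except SagaFailed as err:
    print(err)  # reserve_inventory ok; charge_payment failed: card declined; compensated reserve_inventory
print(order)    # {'order_id': 'o-1', 'reserved': False}
```

Only steps that completed are compensated, and in reverse order: the failed payment step is not refunded because it never took effect, and shipping is never scheduled.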

Architectural Pattern E: Strangler Fig Application Pattern

When and How to Use It: The Strangler Fig Application pattern, named by Martin Fowler, is a powerful architectural pattern for incrementally refactoring a monolithic application by gradually replacing its functionalities with new services. It's an ideal strategy for modernizing large, complex, and business-critical legacy systems that cannot be simply rewritten from scratch due to their size, cost, or risk. The pattern allows for a safe, iterative migration, minimizing disruption to ongoing business operations.

Core Concept:

The pattern works by placing an intercepting facade in front of the old monolithic application while a new system (the "strangler") grows alongside it. As functionalities are developed or refactored, they are implemented in the new system. The facade intercepts requests and routes them either to the new services or, for functionality not yet migrated, to the old monolith. Over time, more and more functionality is "strangled" out of the monolith and into the new system, until eventually the monolith withers away and can be decommissioned.

Implementation Steps:

- Identify a Vertical Slice: Choose a small, self-contained business capability within the monolith that can be extracted or rewritten. This should ideally be a low-risk, high-value area to demonstrate early success.

- Implement the Facade/Proxy: Introduce a new component (e.g., an API Gateway, a reverse proxy, or a dedicated strangler service) that sits between the client (UI, other systems) and the existing monolith. This component is responsible for routing requests.

- Build New Functionality: Develop the chosen vertical slice as a new, independent service (e.g., a microservice or a component within a Clean Architecture application), using modern technologies and advanced patterns.

- Redirect Traffic: Configure the facade/proxy to route requests for the newly implemented functionality to the new service. All other requests continue to go to the monolith.

- Iterate and Expand: Repeat the process, incrementally migrating more functionalities from the monolith to new services. Each migrated piece effectively "strangles" a part of the old system.

- Data Migration/Synchronization: As functionalities move, data ownership might shift. Strategies for data migration (e.g., dual writes, data replication, event-driven synchronization) must be carefully managed to ensure consistency between the old and new systems during the transition.

- Decommission the Monolith: Once all functionalities have been migrated and the monolith is no longer serving any requests, it can be safely decommissioned.
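In practice the facade is usually an API gateway or reverse-proxy configuration (for example, path-based location rules) rather than application code, but its routing decision is simple enough to sketch. The class and upstream URLs below are hypothetical; the two details worth copying are longest-prefix matching and an explicit rollback path.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    path_prefix: str
    upstream: str


class StranglerRouter:
    """Facade routing: migrated path prefixes go to new services, everything else to the monolith."""

    def __init__(self, monolith_upstream: str) -> None:
        self.monolith_upstream = monolith_upstream
        self._routes: list[Route] = []

    def migrate(self, path_prefix: str, upstream: str) -> None:
        """Redirect traffic for one vertical slice to its new service."""
        self._routes.append(Route(path_prefix.rstrip("/"), upstream))
        # Longest prefix first, so /orders/returns can move independently of /orders.
        self._routes.sort(key=lambda r: len(r.path_prefix), reverse=True)

    def rollback(self, path_prefix: str) -> None:
        """Send a slice back to the monolith if the new service misbehaves."""
        self._routes = [r for r in self._routes if r.path_prefix != path_prefix.rstrip("/")]

    def upstream_for(self, path: str) -> str:
        for route in self._routes:
            # Match whole path segments: /orders matches /orders/123 but not /ordersummary.
            if path == route.path_prefix or path.startswith(route.path_prefix + "/"):
                return route.upstream
        return self.monolith_upstream


router = StranglerRouter("http://legacy-monolith:8080")
router.migrate("/orders", "http://orders-service:8080")
print(router.upstream_for("/orders/123"))    # http://orders-service:8080
print(router.upstream_for("/ordersummary"))  # http://legacy-monolith:8080
print(router.upstream_for("/invoices/9"))    # http://legacy-monolith:8080
```

Because redirecting a slice is just a routing change, it can be reversed just as quickly, which is a large part of why the pattern keeps migration risk low.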

Code Organization Strategies

Beyond architectural patterns, effective code organization within services and applications is crucial for maintainability and team productivity.

- Feature-Based Organization: Group code by business feature rather than by technical type (e.g., all code related to "Order Management" in one folder, rather than all "Controllers" in one folder). This aligns with DDD's focus on business capabilities.

- Layered Structure within Services: Even within a single microservice or Bounded Context, apply layered principles (e.g., Clean Architecture's layers: Domain, Application, Infrastructure).

- Modularity with Packages/Modules: Use language-specific package or module systems to create clear internal boundaries, enforcing separation of concerns and preventing accidental coupling.

- Consistent Naming Conventions: Adhere to clear, consistent naming conventions for files, classes, methods, and variables, ideally reflecting the Ubiquitous Language.

- Small, Focused Classes and Functions: Follow the Single Responsibility Principle (SRP). Keep classes and methods small, each with a single, clear responsibility, which makes them easier to read and test.

Configuration Management

Treating configuration as code is a best practice that brings consistency, version control, and automation to environmental settings.

- Externalized Configuration: Separate configuration from code. Use environment variables, configuration files (e.g., YAML, JSON), or dedicated configuration services (e.g., HashiCorp Consul, Spring Cloud Config, AWS Systems Manager Parameter Store) to manage application settings.

- Version Control for Configuration: Store configuration files in Git repositories alongside application code, enabling versioning, auditing, and rollbacks.

- Environment-Specific Configuration: Manage different configurations for development, staging, and production environments, often using profiles or overlays.

- Secrets Management: Use dedicated secrets management services (e.g., HashiCorp Vault, AWS Secrets Manager, Azure Key Vault) for sensitive information like database credentials and API keys, rather than hardcoding or storing them in plain text.

- Dynamic Configuration: Implement mechanisms for applications to dynamically reload configuration changes without requiring a restart, crucial for highly available systems.
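A small Python sketch can show how externalized, environment-specific configuration and secrets handling fit together. It assumes a simple precedence rule (explicit environment variable first, then the active profile's default) and that the secret arrives as an environment variable injected by a secrets manager. The variable names (APP_PROFILE, DB_PASSWORD, and so on) and profile values are hypothetical, and dynamic reloading is not covered.

```python
import os
from dataclasses import dataclass, field
from typing import Dict, Mapping, Optional

# Hypothetical profile defaults. In practice these would be version-controlled
# YAML or JSON files (one per environment), not Python literals.
PROFILES: Dict[str, Dict[str, str]] = {
    "development": {"DB_HOST": "localhost", "DB_POOL_SIZE": "5", "LOG_LEVEL": "DEBUG"},
    "production": {"DB_HOST": "db.internal", "DB_POOL_SIZE": "50", "LOG_LEVEL": "INFO"},
}


@dataclass(frozen=True)
class AppConfig:
    db_host: str
    db_pool_size: int
    log_level: str
    # Injected at runtime (e.g., by a secrets manager); excluded from repr so it is not logged.
    db_password: str = field(repr=False)


def load_config(env: Optional[Mapping[str, str]] = None) -> AppConfig:
    env = os.environ if env is None else env
    profile = env.get("APP_PROFILE", "development")
    if profile not in PROFILES:
        raise ValueError(f"unknown profile: {profile!r}")

    def setting(key: str) -> str:
        # Precedence: explicit environment variable, then profile default.
        return env.get(key, PROFILES[profile][key])

    password = env.get("DB_PASSWORD")
    if not password:
        # Fail fast rather than fall back to a hardcoded credential.
        raise RuntimeError("DB_PASSWORD was not provided")

    return AppConfig(
        db_host=setting("DB_HOST"),
        db_pool_size=int(setting("DB_POOL_SIZE")),
        log_level=setting("LOG_LEVEL"),
        db_password=password,
    )


# Example: production profile with one value overridden by an environment variable.
cfg = load_config({"APP_PROFILE": "production", "DB_PASSWORD": "example-only", "DB_POOL_SIZE": "80"})
print(cfg)  # AppConfig(db_host='db.internal', db_pool_size=80, log_level='INFO')
```

Passing the environment in as a mapping keeps load_config easy to test, and the typed, frozen AppConfig means a misconfigured value fails at startup rather than deep inside request handling.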

Testing Strategies

Comprehensive testing is non-negotiable for systems built with advanced patterns, where distributed complexity can make debugging challenging.

- Unit Testing: Focus on individual components (classes, functions) in isolation. Clean Architecture, with its decoupled domain and application layers, greatly facilitates unit testing of business logic.

- Integration Testing: Verify the interaction between different components within a service or between services (e.g., database integration, API calls to other services). For microservices, this often involves testing consumer-driven contracts.

- End-to-End (E2E) Testing: Simulate user journeys through the entire system, covering multiple services and UI interactions. These are often slower and more brittle but provide confidence in the overall system.

- Contract Testing: For microservices, consumer-driven contract (CDC) testing verifies that a provider still satisfies the expectations its consumers have recorded against its API, catching breaking changes without needing full E2E tests for every interaction. Tools like Pact are commonly used.

- Performance Testing: Load testing, stress testing, and scalability testing to ensure the system meets its non-functional requirements (NFRs) under expected and peak loads.

- Security Testing: Static Application Security Testing (SAST), Dynamic Application Security Testing (DAST), penetration testing, and vulnerability scanning.

- Chaos Engineering: Proactively inject failures into the system (e.g., network latency, service outages) to test its resilience and identify weaknesses. This is crucial for validating the effectiveness of resilience patterns in microservices and EDA.
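As an illustration of how Clean Architecture's separation supports unit testing, the Python sketch below tests a use case against an in-memory test double instead of a database. The ApplyLoyaltyDiscount use case, its 10% rule, and the OrderRepository port are hypothetical; amounts are integer cents to avoid floating-point rounding.

```python
import unittest
from typing import Dict, Protocol


class OrderRepository(Protocol):
    # Port owned by the application layer; infrastructure provides the real adapter.
    def get_total_cents(self, order_id: str) -> int: ...
    def save_discount_cents(self, order_id: str, amount: int) -> None: ...


class ApplyLoyaltyDiscount:
    # Hypothetical use case: 10% off orders of 100.00 or more.
    THRESHOLD_CENTS = 10_000

    def __init__(self, repo: OrderRepository) -> None:
        self._repo = repo

    def execute(self, order_id: str) -> int:
        total = self._repo.get_total_cents(order_id)
        discount = total // 10 if total >= self.THRESHOLD_CENTS else 0
        self._repo.save_discount_cents(order_id, discount)
        return discount


class InMemoryOrderRepository:
    # Test double: no database or network involved.
    def __init__(self, totals: Dict[str, int]) -> None:
        self.totals = totals
        self.discounts: Dict[str, int] = {}

    def get_total_cents(self, order_id: str) -> int:
        return self.totals[order_id]

    def save_discount_cents(self, order_id: str, amount: int) -> None:
        self.discounts[order_id] = amount


class ApplyLoyaltyDiscountTest(unittest.TestCase):
    def test_discount_applied_at_threshold(self) -> None:
        repo = InMemoryOrderRepository({"o-1": 10_000})
        self.assertEqual(ApplyLoyaltyDiscount(repo).execute("o-1"), 1_000)
        self.assertEqual(repo.discounts["o-1"], 1_000)

    def test_no_discount_below_threshold(self) -> None:
        repo = InMemoryOrderRepository({"o-2": 9_999})
        self.assertEqual(ApplyLoyaltyDiscount(repo).execute("o-2"), 0)
```

The tests can be run with python -m unittest. Because the use case depends only on the OrderRepository protocol, they need no infrastructure at all; a separate integration test would exercise the real database adapter.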

Documentation Standards

In complex, distributed systems, high-quality, up-to-date documentation is as critical as the code itself.

- Architecture Decision Records (ADRs): Document significant architectural decisions, their context, alternatives considered, and the chosen solution. This provides a valuable history and rationale for architectural evolution.

- API Documentation: Use tools like OpenAPI/Swagger to define and document REST APIs, providing clear contracts for service consumers.

- Domain Model Documentation: Document the Ubiquitous Language, Bounded Contexts, Aggregates, and key Entities from DDD. This can be done through diagrams, glossaries, and narrative descriptions.

- System Context Diagrams: High-level diagrams showing the system's boundaries and its interactions with external systems and users.

- Container/Service Manifests: Document deployment configurations (e.g., Kubernetes YAML files) and environmental setup.

- Runbooks and Playbooks: Operational documentation for common procedures, troubleshooting guides, and incident response.

- "Living" Documentation: Strive for documentation that is automatically generated from code or tests (e.g., BDD scenarios) or kept in close proximity to the code, reducing the burden of manual updates.

Common Pitfalls and Anti-Patterns

While advanced design patterns offer powerful solutions, their misapplication or the emergence of anti-patterns can lead to systems that are more complex, fragile, and costly than the problems they were intended to solve. Understanding these pitfalls is crucial for successful implementation.

Architectural Anti-Pattern A: The Distributed Monolith

Description: The Distributed Monolith is a microservices anti-pattern where an application is decomposed into multiple services, but these services remain tightly coupled, often sharing a single database, communicating synchronously with high inter-service dependencies, or requiring coordinated deployments. It exhibits the complexities of a distributed system (network latency, partial failures) without gaining the benefits of independent deployability, scalability, or autonomy.

Symptoms:

- Frequent "big-bang" deployments across multiple services.

- Shared database schemas or direct database access across services.

- High volume of synchronous API calls between services, leading to long request chains.

- Changes in one service frequently break others.

- Difficulty in scaling individual services independently.

- Teams waiting on each other for deployments or database changes.

Solutions:

- Strong Bounded Contexts (DDD): Re-evaluate service boundaries using DDD principles to ensure each service encapsulates a truly independent business capability with its own data ownership.

- Asynchronous Communication (EDA): Prioritize event-driven communication over synchronous API calls, especially for critical business flows, using message brokers to decouple services.

- Independent Data Stores: Ensure each microservice owns its data and exposes data only via well-defined APIs or events, avoiding direct database access by other services.

- Consumer-Driven Contracts: Implement contract testing to manage explicit agreements between services, preventing breaking changes.

- Organizational Alignment: Restructure teams to align with service boundaries (Conway's Law) to foster autonomy and reduce inter-team dependencies.
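To make the asynchronous-communication remedy concrete, here is a minimal Python sketch in which an in-memory broker stands in for a real message broker such as Kafka or RabbitMQ. The topic name, the OrderPlaced event, and the two consumers are hypothetical; the point is only that the publishing service depends on a topic and an event schema, not on the services that react to it.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from typing import Any, Callable, DefaultDict, Deque, List, Tuple

EventHandler = Callable[[Any], None]


@dataclass(frozen=True)
class OrderPlaced:
    # Hypothetical event published by the order service.
    order_id: str
    amount_cents: int


class InMemoryBroker:
    """Stand-in for a real broker: producers and consumers share only topic names."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[EventHandler]] = defaultdict(list)
        self._pending: Deque[Tuple[str, Any]] = deque()

    def subscribe(self, topic: str, handler: EventHandler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: Any) -> None:
        # Only enqueues: the publisher neither waits for nor knows about consumers.
        self._pending.append((topic, event))

    def drain(self) -> None:
        # Delivers queued events later, roughly as broker consumers would poll.
        while self._pending:
            topic, event = self._pending.popleft()
            for handler in self._subscribers[topic]:
                handler(event)


broker = InMemoryBroker()
shipped: List[str] = []
invoiced: List[str] = []

# Two independent consumers (e.g., shipping and invoicing) subscribe to the same topic.
broker.subscribe("orders.placed", lambda e: shipped.append(e.order_id))
broker.subscribe("orders.placed", lambda e: invoiced.append(e.order_id))

broker.publish("orders.placed", OrderPlaced("o-1", 4200))
print(shipped, invoiced)  # [] []
broker.drain()
print(shipped, invoiced)  # ['o-1'] ['o-1']
```

Adding a third consumer requires no change to the publisher, which is exactly the independence a distributed monolith lacks. A production broker adds durability, retries, and ordering guarantees that this sketch deliberately omits.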

Architectural Anti-Pattern B: Anemic Domain Model

Description: An Anemic Domain Model is an anti-pattern, particularly prevalent when attempting Domain-Driven Design, where the domain objects (Entities and Value Objects) contain only data (getters/setters) and no business logic or behavior. All the business logic is instead placed in "service" objects (e.g., OrderService, CustomerService), which then operate on these data-only domain objects. This effectively turns the rich domain model into a mere data structure, defeating the purpose of DDD.

Symptoms:

- Domain objects (e.g., Order, Product) have many getters and setters but no methods that encapsulate domain-specific behavior (e.g., order.calculateTotalPrice(), product.applyDiscount()).

- "Service" classes (e.g., OrderService) become large, complex, and filled with business logic that should arguably reside within the domain objects themselves.

- Difficulty in enforcing invariants and consistency rules, as they are scattered across various service methods rather than being encapsulated within Aggregates.

- Loss of the Ubiquitous Language in the code, as behavior is separated from the data it operates on.

Solutions:

- Encapsulate Behavior: Move business logic directly into the domain objects (Entities and Value Objects). For example, an Order object should have methods like addItem(), removeItem(), and confirm(), which encapsulate the rules for these operations.

- Use Aggregates Correctly: Ensure that Aggregates truly encapsulate their internal state and expose behavior through their Aggregate Root, thereby enforcing transactional consistency and invariants.

- Distinguish Domain Services from Application Services: Domain Services should only contain logic that genuinely involves multiple Aggregates or does not naturally belong to a single domain object. Application Services (part of Clean Architecture's Use Cases layer) orchestrate domain objects and interact with infrastructure, but they should not contain core domain logic.

- Focus on Ubiquitous Language: Ensure that the behavior modeled in the code accurately reflects the language and concepts used by domain experts, with behavior residing where the domain experts expect it.
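The following Python sketch shows what the Encapsulate Behavior and Aggregates remedies can look like in practice. It is a hypothetical Order aggregate whose methods are snake_case counterparts of addItem(), removeItem(), and confirm(); its rules ("only draft orders may be modified", "an empty order cannot be confirmed") are illustrative assumptions rather than requirements from any particular domain.

```python
from dataclasses import dataclass, field
from decimal import Decimal
from enum import Enum
from typing import Dict


class OrderStatus(Enum):
    DRAFT = "draft"
    CONFIRMED = "confirmed"


@dataclass(frozen=True)
class OrderLine:
    # Value Object: immutable, compared by value, validates itself.
    sku: str
    quantity: int
    unit_price: Decimal

    def __post_init__(self) -> None:
        if self.quantity <= 0:
            raise ValueError("quantity must be positive")

    @property
    def subtotal(self) -> Decimal:
        return self.unit_price * self.quantity


@dataclass
class Order:
    # Aggregate Root: callers change lines only through the methods below.
    order_id: str
    _lines: Dict[str, OrderLine] = field(default_factory=dict, init=False)
    status: OrderStatus = field(default=OrderStatus.DRAFT, init=False)

    def add_item(self, sku: str, quantity: int, unit_price: Decimal) -> None:
        self._ensure_draft()
        if quantity <= 0:
            raise ValueError("quantity must be positive")
        existing = self._lines.get(sku)
        # Repeated SKUs are merged; the latest unit price wins (an illustrative choice).
        total_qty = quantity + (existing.quantity if existing else 0)
        self._lines[sku] = OrderLine(sku, total_qty, unit_price)

    def remove_item(self, sku: str) -> None:
        self._ensure_draft()
        if sku not in self._lines:
            raise KeyError(f"no line for {sku!r}")
        del self._lines[sku]

    def confirm(self) -> None:
        self._ensure_draft()
        # Invariant: an empty order cannot be confirmed.
        if not self._lines:
            raise ValueError("cannot confirm an empty order")
        self.status = OrderStatus.CONFIRMED

    @property
    def total(self) -> Decimal:
        return sum((line.subtotal for line in self._lines.values()), Decimal("0"))

    def _ensure_draft(self) -> None:
        # Invariant: only draft orders may be modified.
        if self.status is not OrderStatus.DRAFT:
            raise ValueError("order is no longer editable")
```

Because every change goes through the Aggregate Root, an application service only loads the Order, calls one of these methods, and saves it; it no longer has to re-check the invariants itself.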

Process Anti-Patterns

Beyond technical architecture, organizational processes can also become anti-patterns that hinder success.

- Big Bang Release: Attempting to deploy a massive architectural change or a new system all at once. This maximizes risk, increases the potential for catastrophic failure, and delays feedback.

- Solution: Adopt iterative, incremental rollout strategies (like Strangler Fig), continuous delivery, and A/B testing.

- Hero Culture: Relying on a few "heroes" or highly specialized individuals to fix all problems or understand all complex parts of the system. This creates single points of failure, burnout, and knowledge silos.

- Solution: Foster collective code ownership, invest in cross-training, document extensively, and establish communities of practice.

- Analysis Paralysis: Spending excessive time on planning and architectural design without moving to implementation or validation through PoCs. Fear of making a wrong decision leads to no decision.

- Solution: Embrace evolutionary architecture, timebox design phases, prioritize PoCs, and accept that some decisions will be refined over time.

- Ivory Tower Architecture: Architects design systems in isolation without consulting development teams or understanding implementation realities, leading to impractical or unmaintainable designs.

- Solution: Foster collaborative architecture, involve developers in design decisions, conduct regular code reviews, and emphasize architectural guidance over dictation.
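One common way to make a rollout incremental rather than big bang is a percentage-based feature flag. The Python sketch below assumes users are identified by a string ID; it hashes the feature name and user ID into a stable bucket from 0 to 99, so raising the percentage only ever adds users and never flips someone back. The function names and the "new-checkout" feature are hypothetical and not taken from any specific feature-flag product.

```python
import hashlib


def rollout_bucket(user_id: str, feature: str) -> int:
    # Hash feature and user together so each feature gets its own stable bucket in [0, 100).
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def use_new_system(user_id: str, feature: str, percent: int) -> bool:
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    # Buckets never change, so a user enabled at 10% stays enabled at 25%, 50%, 100%.
    return rollout_bucket(user_id, feature) < percent


users = [f"user-{i}" for i in range(10_000)]
enabled = sum(use_new_system(u, "new-checkout", 10) for u in users)
# Expected to be close to, but not exactly, 10%.
print(f"{enabled / len(users):.1%} of users routed to the new system")
```

Starting at a few percent and widening only while error rates and latency stay healthy gives the early feedback that a big bang release delays, and setting the percentage back to 0 acts as an immediate rollback.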

Cultural Anti-Patterns

Organizational culture plays a significant role in the success or failure of adopting advanced patterns.

- Blame Culture: When failures occur, the focus is on finding fault rather than learning from mistakes and improving processes. This stifles innovation and psychological safety.

- Solution: Implement blameless post-mortems, foster a culture of continuous learning, and promote transparency.

- Siloed Teams: Lack of collaboration and communication between different functional teams (e.g., Dev, Ops, QA, Security). This is particularly detrimental to microservices and DevOps.

- Solution: Implement Team Topologies (stream-aligned, platform, enabling, complicated-subsystem teams), foster cross-functional collaboration, and emphasize shared goals.

- Resistance to Change: An organizational reluctance to adopt new technologies, processes, or ways of working, often due to comfort with the status quo or fear of the unknown.

- Solution: Strong leadership sponsorship, clear communication of benefits, incremental adoption, training, and celebrating early successes.

- "Not Invented Here" Syndrome: A bias against ideas, products, or knowledge originating from outside one's own team or organization. This hinders adoption of best practices and open-source solutions.

- Solution: Promote knowledge sharing, encourage participation in open-source communities, and focus on objective evaluation of solutions.

The Top 10 Mistakes to Avoid

- Adopting Microservices without Strong DDD: Decomposing a monolith without clear domain boundaries leads to a distributed monolith.

- Ignoring Observability in Distributed Systems: Deploying microservices or EDA without robust logging, monitoring, and distributed tracing makes diagnosing failures extremely difficult.

- Trying Event Sourcing/CQRS on a Simple CRUD App: Over-engineering for simple problems adds unnecessary complexity and overhead.

- Lack of Automated Testing: Skipping comprehensive testing (especially integration and contract testing) leaves distributed systems fragile.

- Underestimating Operational Complexity: Advanced patterns require mature DevOps, automation, and incident response capabilities.

- Neglecting Data Consistency in EDA/Microservices: Failing to properly handle eventual consistency or distributed transactions (e.g., Saga) leads to data integrity issues.

- Ignoring Conway's Law: Trying to force a microservices architecture onto a monolithic organization structure.

- Skipping PoCs for Complex Patterns: Jumping straight into production with new, unproven architectural choices.

- Allowing Anemic Domain Models in Complex Domains: Stripping business logic out of domain objects, reducing them to data holders and scattering the logic across service classes, especially when attempting DDD.

- Big Rewrite Instead of Strangler Fig for Legacy Systems: Attempting to replace a large monolith in one go, incurring massive risk and cost.

Real-World Case Studies