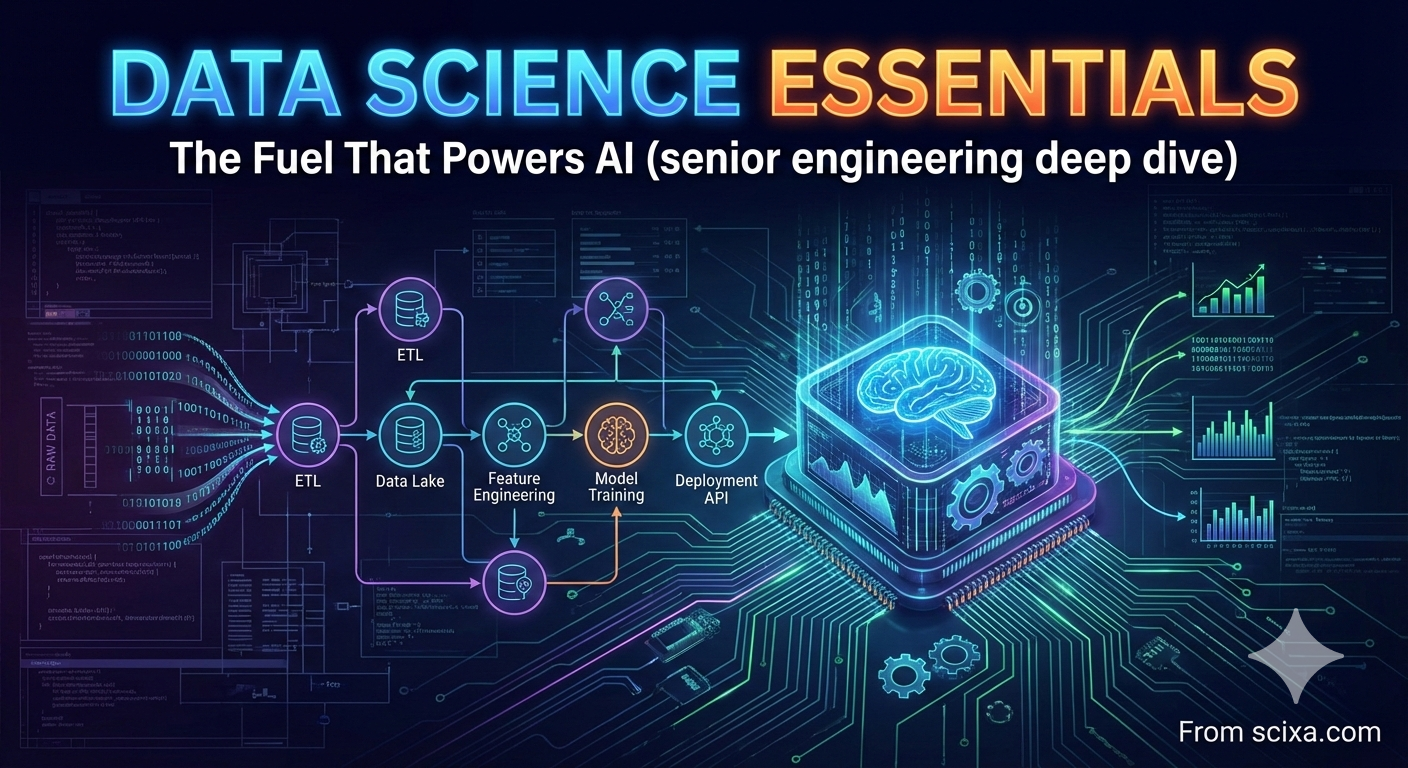

⚡ Data Science Essentials: The Fuel That Powers AI (senior engineering deep dive)

An exhaustive technical reference on the engineering of data-centric AI — from statistical foundations to production-grade MLOps, with rigorous treatment of tradeoffs, failure modes, and diagnostics.

🎯 Learning objectives & prerequisites

- Model data as a stochastic process & design robust ingestion pipelines.

- Analyze bias/variance, feature interactions, and generalization bounds.

- Implement distributed training, monitoring, and concept drift detection.

- Debug silent failures, data leakage, and scalability bottlenecks.

- Assumed: graduate-level linear algebra, probability, Python, and basic ML.

1. Foundational theory: data generating processes

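Every dataset is a finite sample $\{(x_i, y_i)\}_{i=1}^{n}$ drawn from an unknown distribution $P(X, Y)$.

We define the empirical risk of a hypothesis $f$ under a loss $\ell$ as $\hat{R}_n(f) = \frac{1}{n}\sum_{i=1}^{n} \ell(f(x_i), y_i)$, the sample estimate of the true risk $R(f) = \mathbb{E}_{(x,y)\sim P}[\ell(f(x), y)]$.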

2. Core ingestion & validation engineering

Production data pipelines must handle schema evolution, late-arriving data, and idempotent writes. Exactly-once semantics can be achieved with Kafka transactions or with Spark Structured Streaming using checkpoints and write-ahead logs together with idempotent sinks. The data quality dimensions to track are completeness, conformity, consistency, accuracy, and integrity. Automated validation with Deequ (on Spark) computes metrics such as completeness, uniqueness, and value histograms.

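Distributional checks: for numerical columns, track the mean and standard deviation over sliding windows and flag a window whose mean shifts by more than 3σ from the baseline. For categorical columns, use a chi-square test or the population stability index (PSI); as a common rule of thumb, PSI > 0.2 indicates a significant shift.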
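As a rough illustration of the PSI check, the sketch below compares the category shares of a baseline sample with those of a current sample. The function name `psi`, the `eps` floor for empty categories, and the payment-method data are assumptions made for this example only.

```python
import math
from collections import Counter

def psi(expected, actual, eps=1e-6):
    """Population stability index between two categorical samples."""
    categories = set(expected) | set(actual)
    exp_counts, act_counts = Counter(expected), Counter(actual)
    total = 0.0
    for c in categories:
        # eps floors empty categories so the logarithm stays finite (an assumption).
        e = max(exp_counts[c] / len(expected), eps)
        a = max(act_counts[c] / len(actual), eps)
        total += (a - e) * math.log(a / e)
    return total

# Hypothetical payment-method column: baseline window vs. current window.
baseline = ["card"] * 70 + ["wire"] * 20 + ["cash"] * 10
current = ["card"] * 40 + ["wire"] * 40 + ["cash"] * 20

score = psi(baseline, current)
print(f"PSI = {score:.3f}, significant shift = {score > 0.2}")
```

For numerical columns the same function can be applied after binning values into fixed buckets taken from the baseline window.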

2.1 Schema contracts and compatibility

Avro and Protobuf support backward- and forward-compatible schema evolution: adding optional fields is safe; removing required fields breaks consumers. Use a schema registry (e.g., Confluent Schema Registry) to enforce compatibility. In feature stores (Feast, Hopsworks), point-in-time correctness ensures training data doesn't leak future information.

Stream processors (Flink, Kafka Streams) distinguish event time from processing time. A watermark is the threshold for out-of-orderness: with a bounded out-of-orderness of 10 s, the watermark trails the largest event time seen so far by 10 s, and any event with a timestamp earlier than the current watermark is late and is dropped or sent to a side output. This trades latency for completeness. For ML, we often need retraining on complete windows; use allowed lateness and update accumulative models.
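The watermark rule can be simulated in a few lines. This is a simplified sketch, not Flink's API: `route_events` and `max_out_of_orderness` are hypothetical names, and real engines evaluate lateness per window rather than per event.

```python
def route_events(events, max_out_of_orderness):
    """Split (event_time, payload) pairs into on-time and late lists.

    The watermark trails the largest event time seen so far by
    max_out_of_orderness, as in a bounded-out-of-orderness strategy.
    """
    on_time, late = [], []
    max_seen = float("-inf")
    for event_time, payload in events:
        watermark = max_seen - max_out_of_orderness
        if event_time < watermark:
            late.append((event_time, payload))  # candidate for a side output
        else:
            on_time.append((event_time, payload))
        max_seen = max(max_seen, event_time)
    return on_time, late

# Event times in seconds, listed in arrival order (hypothetical data).
events = [(100, "a"), (105, "b"), (97, "c"), (120, "d"), (108, "e"), (115, "f")]
on_time, late = route_events(events, max_out_of_orderness=10)
print("on time:", on_time)
print("late:", late)
```

Raising `max_out_of_orderness` admits more stragglers at the cost of holding windows open longer, which is the latency/completeness tradeoff described above.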

3. Advanced feature engineering & representation learning

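Feature engineering remains the differentiator in tabular domains. We cover feature hashing (the hashing trick) to handle high-cardinality categoricals: a hash function maps each category to one of a fixed number of buckets, so the feature dimension stays bounded regardless of vocabulary size, at the cost of occasional collisions.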
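A minimal sketch of the hashing trick follows, assuming MD5 as the hash and a signed update so that colliding tokens tend to cancel instead of accumulating. The function name `hash_features`, the bucket count, and the example tokens are illustrative choices, not taken from the text.

```python
import hashlib

def hash_features(tokens, n_buckets=16):
    """Map string tokens to a fixed-length vector using the hashing trick."""
    vec = [0.0] * n_buckets
    for tok in tokens:
        digest = hashlib.md5(tok.encode("utf-8")).digest()
        h = int.from_bytes(digest[:8], "big")
        index = h % n_buckets
        # The top hash bit picks the sign of the update.
        sign = 1.0 if (h >> 63) & 1 == 0 else -1.0
        vec[index] += sign
    return vec

# Hypothetical categorical values encoded as "column=value" tokens.
row = ["merchant_id=48213", "country=DE", "device=ios"]
print(hash_features(row))
```

The vector length is fixed up front, so unseen categories at serving time need no vocabulary update; they simply land in an existing bucket.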

Feature selection: filter methods (mutual information, ANOVA), wrapper methods (RFE, Boruta), and embedded methods (L1 regularization, tree importance). In high dimensions, check that the selected features are stable across cross-validation folds to avoid overfitting the selection step. Dimensionality reduction: PCA (linear) and UMAP/t-SNE (nonlinear, mainly for visualization); beware of information loss.

3.1 Automated feature engineering

Libraries like Featuretools apply deep feature synthesis: stacking transformations (max, min, avg, trend) across relationships. However, combinatorial explosion leads to thousands of features; use feature pruning based on importance.

4. Algorithmic internals: optimization & neural architectures

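We examine gradient descent variants: SGD with momentum (including Nesterov), RMSProp, and Adam (adaptive moment estimation). With gradient $g_t$ at step $t$, step size $\alpha$, decay rates $\beta_1, \beta_2$, and a small constant $\epsilon$, the Adam update is

$$m_t = \beta_1 m_{t-1} + (1-\beta_1)\, g_t, \qquad v_t = \beta_2 v_{t-1} + (1-\beta_2)\, g_t^2$$

$$\hat{m}_t = \frac{m_t}{1-\beta_1^t}, \qquad \hat{v}_t = \frac{v_t}{1-\beta_2^t}, \qquad \theta_t = \theta_{t-1} - \alpha\, \frac{\hat{m}_t}{\sqrt{\hat{v}_t} + \epsilon}$$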
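To make the Adam update concrete, here is a scalar sketch of the standard rule (first and second moment estimates with bias correction). The function name `adam_step`, the quadratic objective, and the learning rate are assumptions chosen for the demonstration.

```python
import math

def adam_step(theta, grad, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update for a scalar parameter; t is the 1-based step count."""
    m = beta1 * m + (1 - beta1) * grad          # first-moment estimate
    v = beta2 * v + (1 - beta2) * grad * grad   # second-moment estimate
    m_hat = m / (1 - beta1 ** t)                # bias correction
    v_hat = v / (1 - beta2 ** t)
    theta = theta - lr * m_hat / (math.sqrt(v_hat) + eps)
    return theta, m, v

# Minimize the toy objective f(theta) = (theta - 3)^2.
theta, m, v = 0.0, 0.0, 0.0
for t in range(1, 2001):
    grad = 2.0 * (theta - 3.0)
    theta, m, v = adam_step(theta, grad, m, v, t, lr=0.05)
print(f"theta after 2000 steps: {theta:.4f}")
```

Because of the bias correction, the very first step has magnitude close to `lr` regardless of the gradient scale, which is one reason Adam is less sensitive to gradient magnitude than plain SGD.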

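Backpropagation through time for RNNs differentiates through the unfolded computational graph; gradient clipping guards against exploding gradients. Attention mechanisms: scaled dot-product attention computes $\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{QK^\top}{\sqrt{d_k}}\right)V$, where $d_k$ is the key dimension.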
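Scaled dot-product attention can be written out in plain Python for small inputs. This sketch uses nested lists instead of tensors and a single head; the helper names and the toy matrices are assumptions for illustration.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of scores."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Return (outputs, weights) of softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(K[0])
    outputs, weights = [], []
    for q in Q:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(wj * v[c] for wj, v in zip(w, V))
                        for c in range(len(V[0]))])
    return outputs, weights

# Two queries that each align strongly with one key (toy values).
Q = [[10.0, 0.0], [0.0, 10.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0]]
out, w = attention(Q, K, V)
print(out)
```

Each output row is a convex combination of the rows of `V`, so a query that matches one key strongly returns nearly that key's value.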

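The entries below are the usual asymptotic costs, with $n$ training samples, $p$ features, $T$ trees (n_trees) of depth $d$, sequence length $s$, and model width $d_{\text{model}}$.

| Model | Training complexity | Inference complexity | Memory (params) |
|---|---|---|---|
| Linear regression (closed form) | $O(np^2 + p^3)$ | $O(p)$ | $O(p)$ |
| Random forest (n_trees, depth d) | $O(T \cdot p \cdot n \log n)$ for roughly balanced trees | $O(T \cdot d)$ | $O(T \cdot 2^d)$ nodes in the worst case |
| Transformer (L layers, h heads) | $O(L(s^2 d_{\text{model}} + s\, d_{\text{model}}^2))$ per sequence and step | $O(L(s^2 d_{\text{model}} + s\, d_{\text{model}}^2))$ per forward pass | $O(L\, d_{\text{model}}^2)$ |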

5. Architectural tradeoffs: training/serving & reproducibility

Reproducibility debt: pin library versions (conda-lock, Poetry), record a hash of the dataset, and log hyperparameters with a tracker such as MLflow or Kubeflow. For distributed training, ring all-reduce is bandwidth-optimal. Synchronous vs asynchronous updates: synchronous training applies consistent gradient updates but is paced by the slowest worker (stragglers); asynchronous training, typical of parameter-server setups, is faster but applies stale gradients and may diverge.
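Recording a dataset hash alongside hyperparameters can be as simple as the sketch below, which uses only the standard library. The manifest fields and the function names `file_sha256` and `run_manifest` are assumptions; a tracker such as MLflow would store the same information as run parameters or tags.

```python
import hashlib
import json
import os
import platform
import sys
import tempfile

def file_sha256(path, chunk_size=1 << 20):
    """Hash a file in chunks so large datasets do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def run_manifest(dataset_path, hyperparams, seed):
    """Collect the facts needed to reproduce a training run."""
    return {
        "dataset_sha256": file_sha256(dataset_path),
        "hyperparams": hyperparams,
        "seed": seed,
        "python": sys.version.split()[0],
        "platform": platform.platform(),
    }

# Demonstration on a throwaway file standing in for the training set.
with tempfile.NamedTemporaryFile("wb", suffix=".csv", delete=False) as tmp:
    tmp.write(b"amount,label\n10.5,0\n99.0,1\n")
    demo_path = tmp.name
manifest = run_manifest(demo_path, {"lr": 0.01, "max_depth": 6}, seed=42)
os.remove(demo_path)
print(json.dumps(manifest, indent=2))
```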

5.1 Model serving patterns

Online: REST/gRPC endpoints with a preloaded model (TF Serving, TorchServe). Batch: offline predictions on Spark with the model broadcast to executors. Roll out new models or ensembles through canary deployments and A/B tests. For edge devices, quantize to int8 (TensorRT, Core ML) and prune unimportant weights.

6. Exhaustive taxonomy of failure modes

- Silent data corruption: bit flips, partial writes. Use checksums (CRC32) and data validation before training.

- Feedback loop bias: model predictions influence future data (e.g., recommendation systems). Leads to echo chamber. Mitigation: inject exploration, use unbiased offline evaluation.

- Target leakage: feature includes information from future. Common in time series: using tomorrow's value as feature. Solution: rigorous temporal splitting.

- Imbalanced classes and asymmetric costs: Focal loss, weighted loss, or resampling (SMOTE). But oversampling may cause overfitting.

- Adversarial attacks: small perturbations cause misclassification. Defend with adversarial training (PGD) or input preprocessing (bit depth reduction).
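The temporal splitting recommended against target leakage can be sketched as follows. The record layout, the field name `event_date`, and the function `temporal_split` are hypothetical; the point is only that every training row precedes every evaluation row.

```python
from datetime import date

def temporal_split(rows, cutoff, time_key="event_date"):
    """Put rows strictly before the cutoff in train, the rest in test."""
    train = [r for r in rows if r[time_key] < cutoff]
    test = [r for r in rows if r[time_key] >= cutoff]
    return train, test

# Hypothetical daily records; order of arrival does not matter.
rows = [
    {"event_date": date(2024, 1, 3), "amount": 40.0, "label": 0},
    {"event_date": date(2024, 1, 1), "amount": 12.5, "label": 0},
    {"event_date": date(2024, 1, 9), "amount": 980.0, "label": 1},
    {"event_date": date(2024, 1, 7), "amount": 15.0, "label": 0},
]
train, test = temporal_split(rows, cutoff=date(2024, 1, 5))

# Guard against leakage: the newest training row must predate the oldest test row.
assert max(r["event_date"] for r in train) < min(r["event_date"] for r in test)
print(len(train), "train rows,", len(test), "test rows")
```

A random shuffle split would mix later rows into training, which is exactly how future information reaches the model unnoticed.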

7. Security & privacy in ML systems

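Differential privacy (DP) adds calibrated noise to gradients (as in DP-SGD) or to outputs. For a privacy budget $\varepsilon$, a randomized mechanism $M$ is $\varepsilon$-differentially private if $\Pr[M(D) \in S] \le e^{\varepsilon}\, \Pr[M(D') \in S]$ for every set of outputs $S$ and every pair of datasets $D, D'$ that differ in a single record.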

8. Performance engineering & optimization

GPU utilization: ensure data loading is not the bottleneck (use tf.data or NVIDIA DALI). Overlap compute and host-to-device transfer via CUDA streams. Mixed-precision training (FP16) with loss scaling can give roughly a 2x speedup on GPUs with tensor cores. On CPU, use vectorized instructions (AVX) and Intel MKL. In distributed training, gradient compression (Top-K sparsification, QSGD) reduces communication.

9. Systematic diagnostics & interpretability

Residual analysis: plot residuals against predictions; a systematic pattern suggests the model is missing a nonlinearity or an interaction. SHAP values provide additive feature attributions; for tree-based models, TreeSHAP computes them efficiently. Monitor the mean absolute SHAP value per feature over time to detect drift. Contrastive explanations describe what would have to change in the input to flip a prediction.

Gradient-based debugging: for neural nets, examine gradient norms per layer; if they vanish (e.g., < 1e-7), use better initialization or skip connections. Dead ReLU: many units output zero for every input; consider LeakyReLU.

10. Production implementation blueprint

We design a fraud detection pipeline: Kafka → Flink (aggregates) → feature store → model server (TensorFlow Serving), with shadow traffic for candidate models. Monitoring covers the KS statistic on the prediction distribution, drift in the average prediction, and data quality alerts. Use an A/B testing framework to compare model candidates, and store model signatures in MLMD (the ML Metadata store).
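The KS statistic used for monitoring the prediction distribution is the largest gap between two empirical CDFs. A dependency-free sketch is shown here; the function name `ks_statistic` and the score samples are assumptions, and in practice a library routine such as SciPy's two-sample KS test would also return a p-value.

```python
import bisect

def ks_statistic(reference, live):
    """Two-sample Kolmogorov-Smirnov statistic: max gap between empirical CDFs."""
    ref, cur = sorted(reference), sorted(live)
    gap = 0.0
    for x in ref + cur:
        cdf_ref = bisect.bisect_right(ref, x) / len(ref)
        cdf_cur = bisect.bisect_right(cur, x) / len(cur)
        gap = max(gap, abs(cdf_ref - cdf_cur))
    return gap

# Hypothetical fraud scores: last week's window vs. today's window.
reference_scores = [i / 100 for i in range(100)]
live_scores = [min(1.0, s + 0.3) for s in reference_scores]
print(f"KS = {ks_statistic(reference_scores, live_scores):.2f}")
```

An alert threshold on this value is a judgment call that depends on window size; small windows produce noisy statistics.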

10.1 Code snippet: feature validation with Deequ

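```scala
// Scala (Deequ)
import com.amazon.deequ.VerificationSuite
import com.amazon.deequ.checks.{Check, CheckLevel}

// `data` is the Spark DataFrame of transactions to validate.
val verificationResult = VerificationSuite()
  .onData(data)
  .addCheck(
    Check(CheckLevel.Warning, "fraud checks")
      .hasSize(_ >= 1000000)                // at least one million rows
      .hasMin("amount", _ >= 0.0)           // no negative amounts
      .hasMax("amount", _ <= 100000)        // upper bound on plausible amounts
      .hasCompleteness("txn_id", _ == 1.0)  // no missing transaction IDs
      .hasUniqueness("txn_id", _ == 1.0))   // no duplicate transaction IDs
  .run()
```

11. Comparative analysis: batch, streaming, online learning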

| Paradigm | Latency | Throughput | Model update | State management |
|---|---|---|---|---|
| Batch (Spark, BigQuery) | hours | very high | periodic retraining | stateless |
| Micro-batch (Structured Streaming) | seconds to minutes | high | mini-batch update | checkpoint |
| True streaming (Flink) | sub-second | medium | per-record update | RocksDB state |
| Online learning (River) | real-time | high per core | incremental | in-memory |

12. Technical debt and hidden feedback loops

ML systems have high maintenance cost: glue code, pipeline dependencies, configuration debt. Hidden feedback: if model influences business decisions (e.g., loan approval), the next training set becomes biased by rejections. Use reject inference techniques. Also, cascading failures: upstream data source changes schema silently; downstream models fail.

13. Advanced exercises & reasoning

13.1 Conceptual reasoning: explainability

You deploy a random forest credit model. After 6 months, default rates increase, but model AUC remains stable. What could be happening?

Solution: Calibration shift—model scores still rank order but probabilities are miscalibrated. Recalibrate using Platt scaling or isotonic regression on recent data.

13.2 Debugging scenario

A real-time ad CTR model shows a prediction drop at midnight. The feature "hour_of_day" was not included, but traffic composition changes (more bots at night). How to debug?

Solution: Segment performance by hour, check feature distributions across hours, and add time features or traffic source features.

13.3 Applied challenge: feature store design

Design a feature store for a ride-sharing ETA model that uses real-time traffic and historical trip stats. Include point-in-time correctness, low-latency retrieval, and batch backfill. Discuss storage (Redis for online, Cassandra for historical) and orchestration (Airflow for materialization).

14. Compendium of best practices

- Data lineage: track origin, transformations, and version.

- Model validation: shadow mode, canary, A/B tests.

- Robustness: train with noise (data augmentation), use adversarial validation.

- Observability: log predictions, features, and metadata. Set up dashboards for drift and data quality.

15. Synthesis: engineering the fuel

Data science in production is an exercise in risk management: distributional stability, failure modes, and scalability. The discipline demands rigorous data validation, continuous monitoring, and a culture of reproducibility. Master these, and you build AI that thrives in the wild.

16. References & further study

“Designing Data-Intensive Applications” (Kleppmann); “Machine Learning Yearning” (Ng); “Reliable Machine Learning” (Google); “Dynamical Systems” for recurrent networks. NeurIPS/ICML papers on domain adaptation, fairness, and interpretability.

17. Causal reasoning and data science

Correlation is not causation; to reason about interventions we need causal models. The main tools are directed acyclic graphs (DAGs), do-calculus, and propensity scoring. In recommendation, causal graphs help identify and adjust for confounding. Uplift modeling estimates the incremental impact of a treatment.

18. Hyperparameter optimization at scale

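Common approaches are Bayesian optimization (Gaussian processes), population-based training, and Hyperband. Asynchronous successive halving (ASHA) suits distributed tuning because it promotes promising trials without waiting for a full rung to finish. Combine these with early stopping to save resources.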
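The core of Hyperband and ASHA is successive halving. The sketch below is the plain synchronous version, not ASHA itself: each rung keeps the best 1/eta of the configurations and multiplies the budget by eta. The toy loss, the candidate learning rates, and the names `successive_halving` and `toy_loss` are assumptions for illustration.

```python
import math

def successive_halving(configs, evaluate, min_budget=1, eta=3):
    """Return the surviving config; evaluate(config, budget) returns a loss."""
    survivors, budget = list(configs), min_budget
    while len(survivors) > 1:
        ranked = sorted(survivors, key=lambda c: evaluate(c, budget))
        keep = max(1, len(survivors) // eta)
        survivors = ranked[:keep]   # promote the best 1/eta to the next rung
        budget *= eta               # survivors get eta times more budget
    return survivors[0]

def toy_loss(lr, budget):
    """Stand-in for 'train for `budget` epochs and report validation loss'."""
    return (math.log10(lr) + 2.0) ** 2 + 1.0 / budget

learning_rates = [1e-5, 3e-5, 1e-4, 3e-4, 1e-3, 3e-3, 1e-2, 3e-2, 1e-1]
best = successive_halving(learning_rates, toy_loss)
print("selected learning rate:", best)
```

With nine candidates and eta = 3 the search runs two rungs (9 to 3 to 1), so most of the budget goes to the few configurations that survive early screening.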

19. Fairness constraints & bias mitigation

Common fairness criteria are demographic parity and equalized odds. Use disparate impact analysis to measure bias, and reweighting or adversarial debiasing to mitigate it. Monitor subgroups for performance disparity.
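Demographic parity and disparate impact both reduce to comparing positive-prediction rates across groups. A small sketch, assuming binary predictions and a single group attribute; the function names and the sample data are hypothetical.

```python
def selection_rates(y_pred, groups):
    """Share of positive predictions within each group."""
    totals, positives = {}, {}
    for pred, group in zip(y_pred, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + pred
    return {g: positives[g] / totals[g] for g in totals}

def demographic_parity_gap(rates):
    """Largest difference in selection rate between any two groups."""
    return max(rates.values()) - min(rates.values())

def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest."""
    return min(rates.values()) / max(rates.values())

# Hypothetical approvals (1 = approved) for two groups of four applicants.
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
rates = selection_rates(y_pred, groups)
print(rates, demographic_parity_gap(rates), disparate_impact_ratio(rates))
```

Equalized odds needs the true labels as well, since it compares true-positive and false-positive rates per group rather than raw selection rates.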